Practice: Chp. 15#

The answers to the questions in this chapter were originally developed with rstan, but running all

the cells at once would consistently produce a crash (greater than half the time). The error was

similar to:

*** caught segfault ***

address 0x7fd4069fa9d0, cause 'memory not mapped'

An irrecoverable exception occurred. R is aborting now ...

Stan developers advise moving to CmdStan to avoid these crashes:

Installation instructions for cmdstan and cmdstanr:

This environment includes cmdstan in conda:

library(cmdstanr)

set_cmdstan_path("/opt/conda/bin/cmdstan")

This is cmdstanr version 0.4.0

- Online documentation and vignettes at mc-stan.org/cmdstanr

- Use set_cmdstan_path() to set the path to CmdStan

- Use install_cmdstan() to install CmdStan

CmdStan path set to: /opt/conda/bin/cmdstan

After switching to CmdStan, the crashes completely went away. For more details on the process, see the commits near this change. The warning messages you see in this chapter may look otherwise unfamiliar.

source("iplot.R")

suppressPackageStartupMessages(library(rethinking))

15E1. Rewrite the Oceanic tools model (from Chapter 11) below so that it assumes measured error on the log population sizes of each society. You don’t need to fit the model to data. Just modify the mathematical formula below.

Answer. Assuming a \(P_{SE,i}\) is available, though it is not in the data:

15E2. Rewrite the same model so that it allows imputation of missing values for log population. There aren’t any missing values in the variable, but you can still write down a model formula that would imply imputation, if any values were missing.

Answer. As a challenge we’ll add this to model that also considers measurement error, assuming either both the observed value and the measurement are present, or neither. The priors could be much better:

15M1. Using the mathematical form of the imputation model in the chapter, explain what is being assumed about how the missing values were generated.

ERROR. It’s not clear what the author means by ‘the imputation model’; there are several models in the text.

Answer. In section 15.2.2. the first imputation model assumes a normal distribution for brain sizes. This is somewhat unreasonable, as explained in the text, because these values should be bounded between zero and one.

In the second model for this primate example we still assume a normal distribution, but now correlated with body mass.

15M2. Reconsider the primate milk missing data example from the chapter. This time, assign \(B\) a distribution that is properly bounded between zero and 1. A beta distribution, for example, is a good choice.

Answer. This question requires a start= list to run at all, which isn’t suggested for these

imputation models until a later question. We use the dbeta2 parameterization provided by ulam to

make the parameters a bit more understandable (as opposed to dbeta). Fitting the model:

data(milk)

d <- milk

d$neocortex.prop <- d$neocortex.perc / 100

d$logmass <- log(d$mass)

stdB = standardize(d$neocortex.prop)

dat_list <- list(

K = standardize(d$kcal.per.g),

B = d$neocortex.prop,

M = standardize(d$logmass),

center = attr(stdB, "scaled:center"),

scale = attr(stdB, "scaled:scale")

)

m_milk_beta <- ulam(

alist(

K ~ dnorm(mu, sigma),

mu <- a + bB*B + bM*M,

B ~ dbeta2(p, theta),

a ~ dnorm(0, 0.5),

c(bB, bM) ~ dnorm(0, 0.5),

p ~ dunif(0, 1),

theta ~ dunif(0, 1000),

sigma ~ dexp(1)

), cmdstan=TRUE, data=dat_list, chains=4, cores=4, start=list(B_impute=rep(0.7,12))

)

Found 12 NA values in B and attempting imputation.

Without bounds on the parameters to the beta distribution, this model produces a significant number

of divergent transitions. To avoid these errors, we are using uniform priors on the two parameters

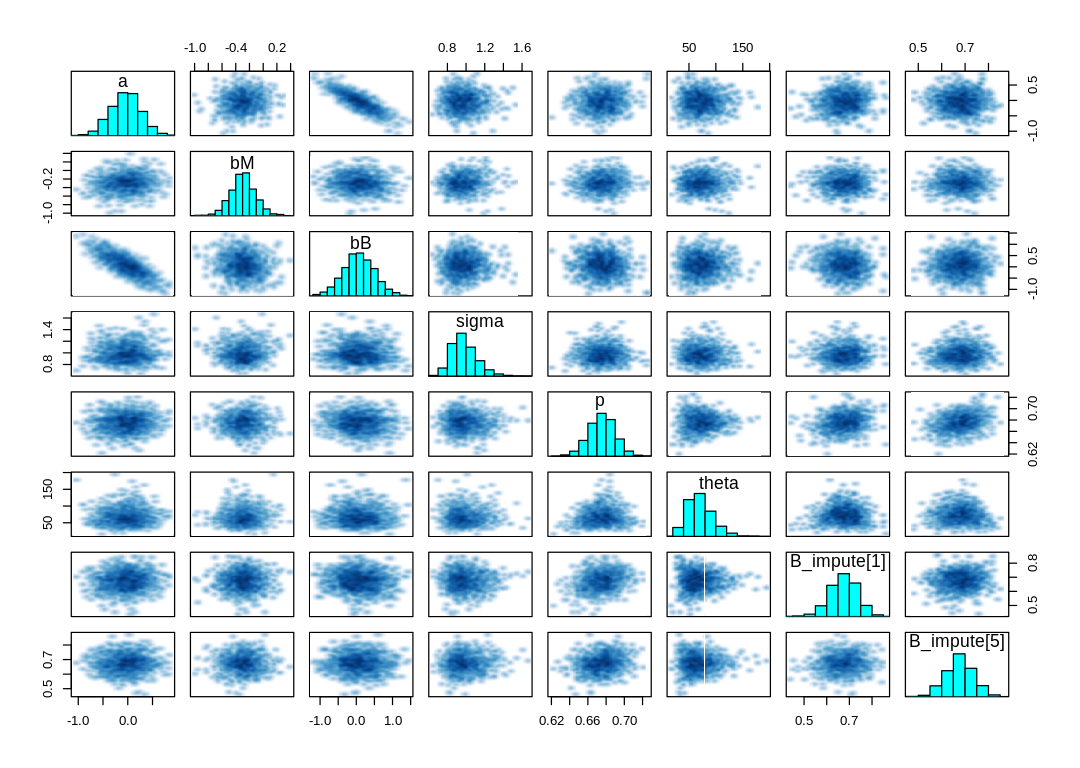

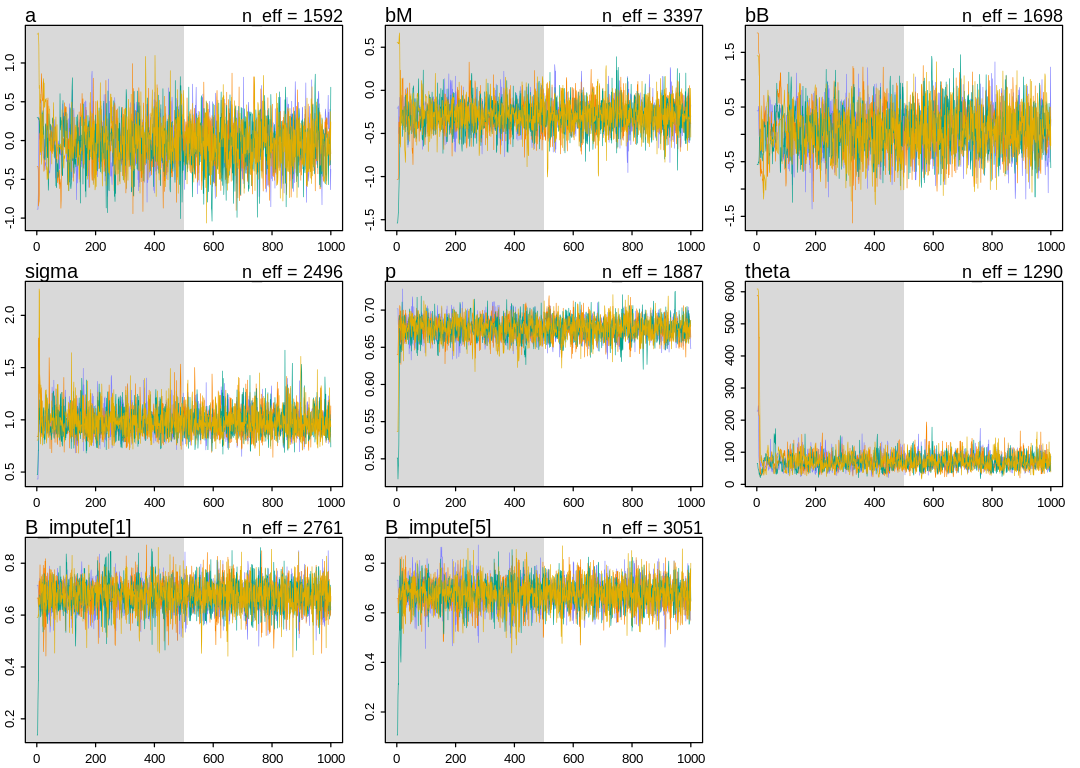

to the beta distribution. Checking the pairs and traceplot:

sel_pars=c("a", "bM", "bB", "sigma", "p", "theta", "B_impute[1]", "B_impute[5]")

iplot(function() {

pairs(m_milk_beta@stanfit, pars=sel_pars)

})

iplot(function() {

traceplot(m_milk_beta, pars=sel_pars)

})

[1] 1000

[1] 1

[1] 1000

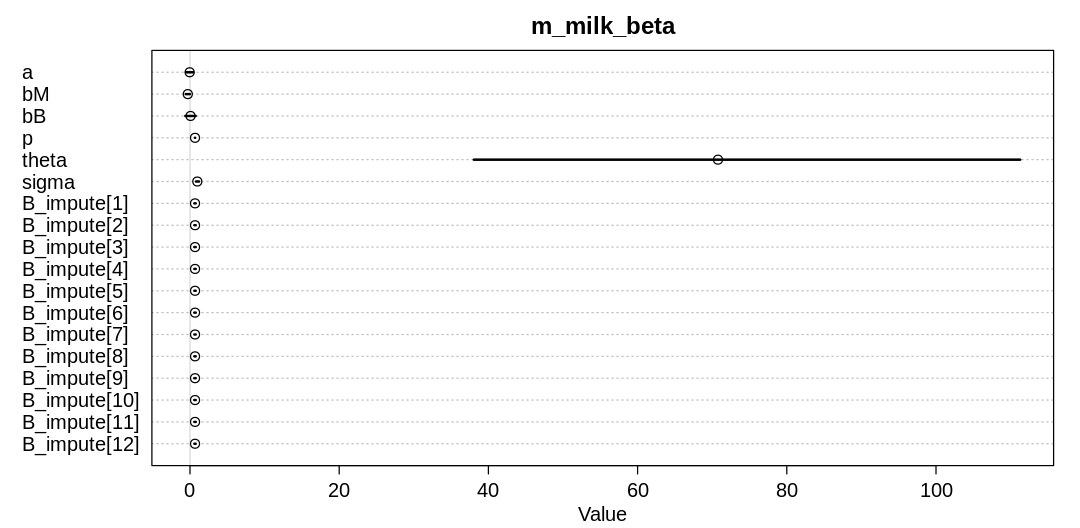

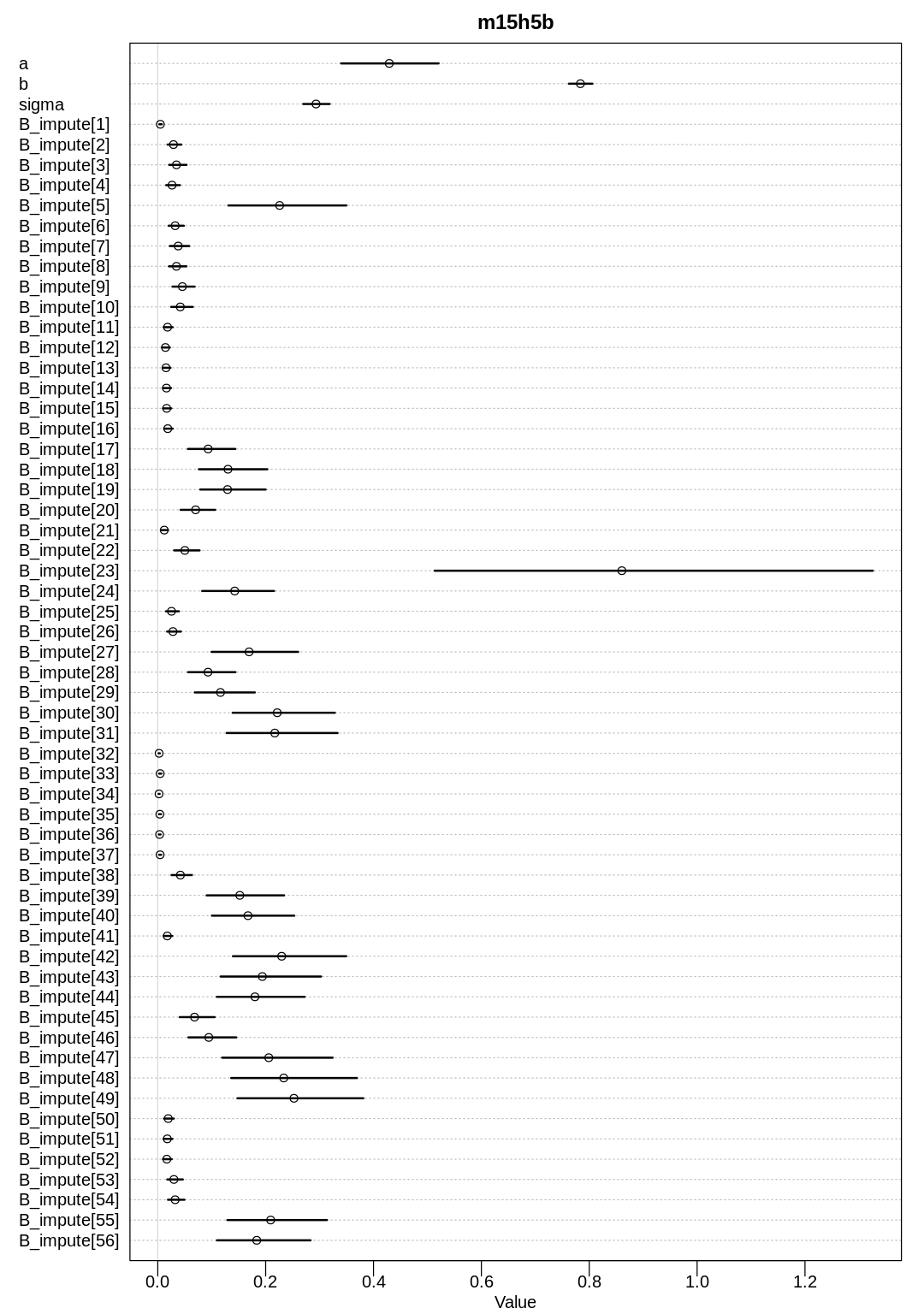

The precis results:

display(precis(m_milk_beta, depth=3), mimetypes="text/plain")

iplot(function() {

plot(precis(m_milk_beta, depth=3), main="m_milk_beta")

}, ar=2)

mean sd 5.5% 94.5% n_eff

a -0.04295665 0.30361899 -0.5376136 0.426910758 1592.433

bM -0.29418840 0.18302593 -0.5798064 -0.002633437 3397.419

bB 0.07365296 0.43005402 -0.6299967 0.762377178 1698.291

p 0.67533351 0.01442545 0.6522330 0.697699699 1886.658

theta 70.77433602 23.50242292 38.0426798 111.287303705 1289.928

sigma 0.98056955 0.13683458 0.7918979 1.216318996 2495.705

B_impute[1] 0.67454671 0.06042883 0.5781118 0.766966686 2760.941

B_impute[2] 0.67520062 0.05694163 0.5842362 0.764997764 3034.462

B_impute[3] 0.67567915 0.05998694 0.5766410 0.770490172 3037.513

B_impute[4] 0.67604852 0.05811344 0.5790328 0.765995901 3214.573

B_impute[5] 0.67626441 0.05852606 0.5807795 0.768901601 3051.055

B_impute[6] 0.67604027 0.06213170 0.5747488 0.767945883 2282.398

B_impute[7] 0.67492820 0.05825896 0.5816310 0.766487154 3311.031

B_impute[8] 0.67587230 0.05888821 0.5780833 0.765306819 3140.502

B_impute[9] 0.67528655 0.05869325 0.5753336 0.765554759 3158.336

B_impute[10] 0.67563438 0.06162871 0.5714415 0.768452190 2902.297

B_impute[11] 0.67530152 0.06010048 0.5779499 0.768968719 2813.118

B_impute[12] 0.67451433 0.05874663 0.5801504 0.764538005 2611.613

Rhat4

a 1.0015286

bM 0.9990618

bB 1.0027464

p 0.9999588

theta 0.9993599

sigma 0.9997023

B_impute[1] 0.9989987

B_impute[2] 0.9991325

B_impute[3] 0.9991644

B_impute[4] 0.9992428

B_impute[5] 0.9994196

B_impute[6] 0.9990582

B_impute[7] 0.9990442

B_impute[8] 0.9986711

B_impute[9] 0.9994715

B_impute[10] 1.0000818

B_impute[11] 1.0001818

B_impute[12] 0.9988374

The theta parameter to the beta distribution is highly uncertain because there are simply too many

values that are compatible with these observations. The imputed values are essentially nothing more

than the mean of the non-NA observations of brain size. That is, any gentle tilt of the imputed

values we’d expect from using the observed mass seems to have disappeared.

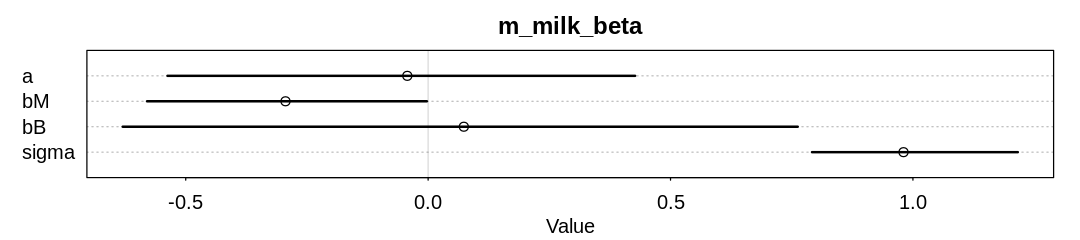

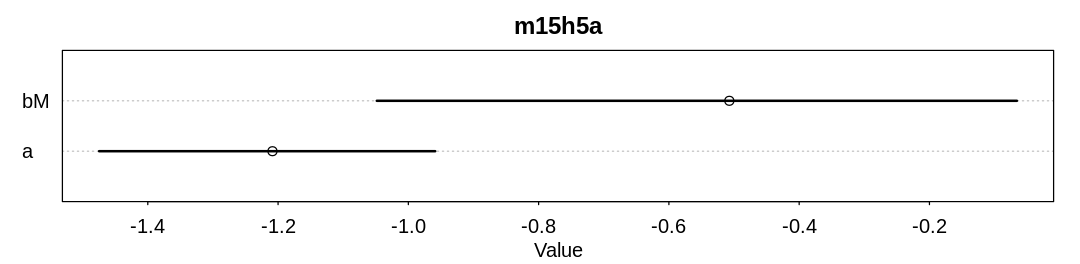

Let’s zoom in on some parameters of interest:

iplot(function() {

plot(precis(m_milk_beta, depth=3), main="m_milk_beta", pars=c("a", "bM", "bB", "sigma"))

}, ar=4.5)

Compare these results to the figure produced from R code 15.20 in the chapter. The inference for

bM has shrunk even further. It’s likely bB has shrunk as well, though in this new model bB is

multiplied by a probability rather than a standardized value so we can expect it to be smaller.

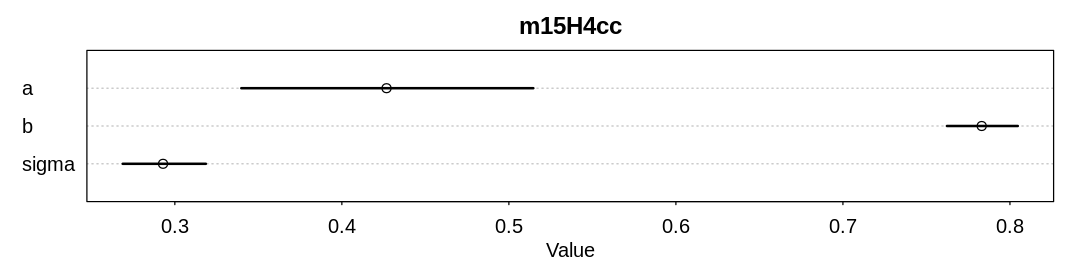

15M3. Repeat the divorce data measurement error models, but this time double the standard errors. Can you explain how doubling the standard errors impacts inference?

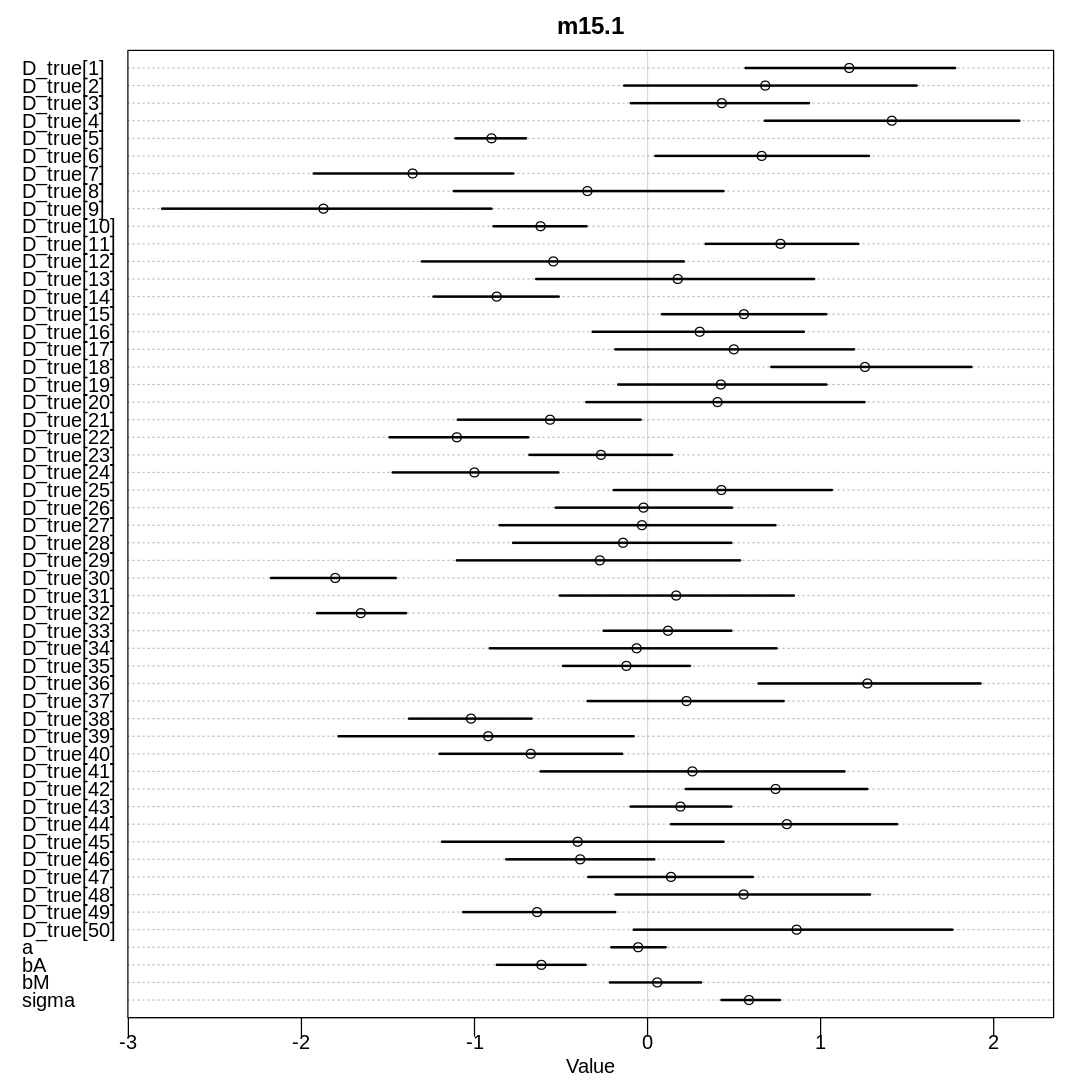

Answer. Let’s start by reproducing the results from the chapter, for the sake of comparison:

data(WaffleDivorce)

d <- WaffleDivorce

dlist <- list(

D_obs = standardize(d$Divorce),

D_sd = d$Divorce.SE / sd(d$Divorce),

M = standardize(d$Marriage),

A = standardize(d$MedianAgeMarriage),

N = nrow(d)

)

m15.1 <- ulam(

alist(

D_obs ~ dnorm(D_true, D_sd),

vector[N]:D_true ~ dnorm(mu, sigma),

mu <- a + bA * A + bM * M,

a ~ dnorm(0, 0.2),

bA ~ dnorm(0, 0.5),

bM ~ dnorm(0, 0.5),

sigma ~ dexp(1)

),

cmdstan = TRUE, data = dlist, chains = 4, cores = 4

)

display(precis(m15.1, depth=3), mimetypes="text/plain")

iplot(function() {

plot(precis(m15.1, depth=3), main="m15.1")

}, ar=1)

dlist <- list(

D_obs = standardize(d$Divorce),

D_sd = d$Divorce.SE / sd(d$Divorce),

M_obs = standardize(d$Marriage),

M_sd = d$Marriage.SE / sd(d$Marriage),

A = standardize(d$MedianAgeMarriage),

N = nrow(d)

)

m15.2 <- ulam(

alist(

D_obs ~ dnorm(D_true, D_sd),

vector[N]:D_true ~ dnorm(mu, sigma),

mu <- a + bA * A + bM * M_true[i],

M_obs ~ dnorm(M_true, M_sd),

vector[N]:M_true ~ dnorm(0, 1),

a ~ dnorm(0, 0.2),

bA ~ dnorm(0, 0.5),

bM ~ dnorm(0, 0.5),

sigma ~ dexp(1)

),

cmdstan = TRUE, data = dlist, chains = 4, cores = 4

)

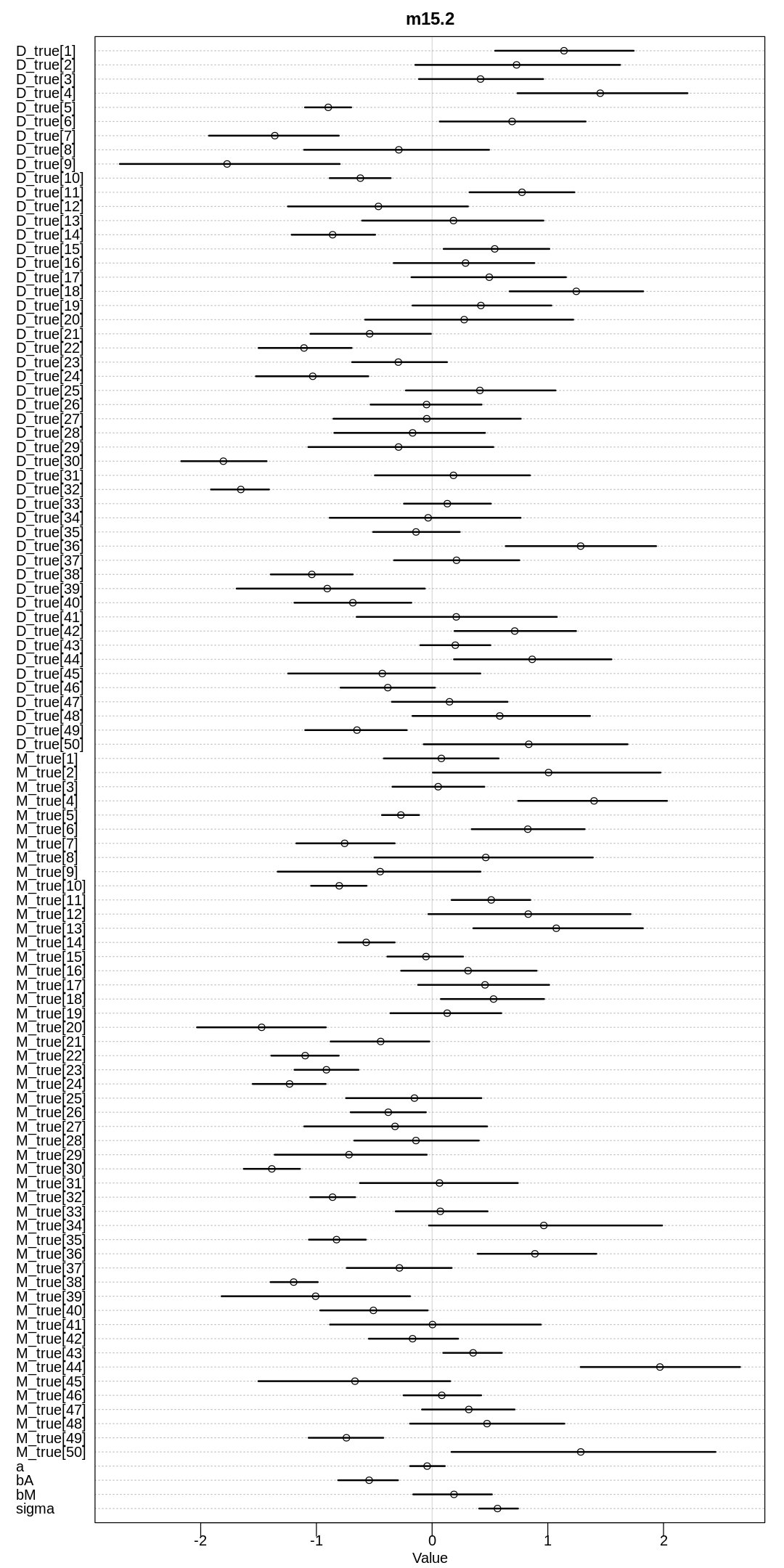

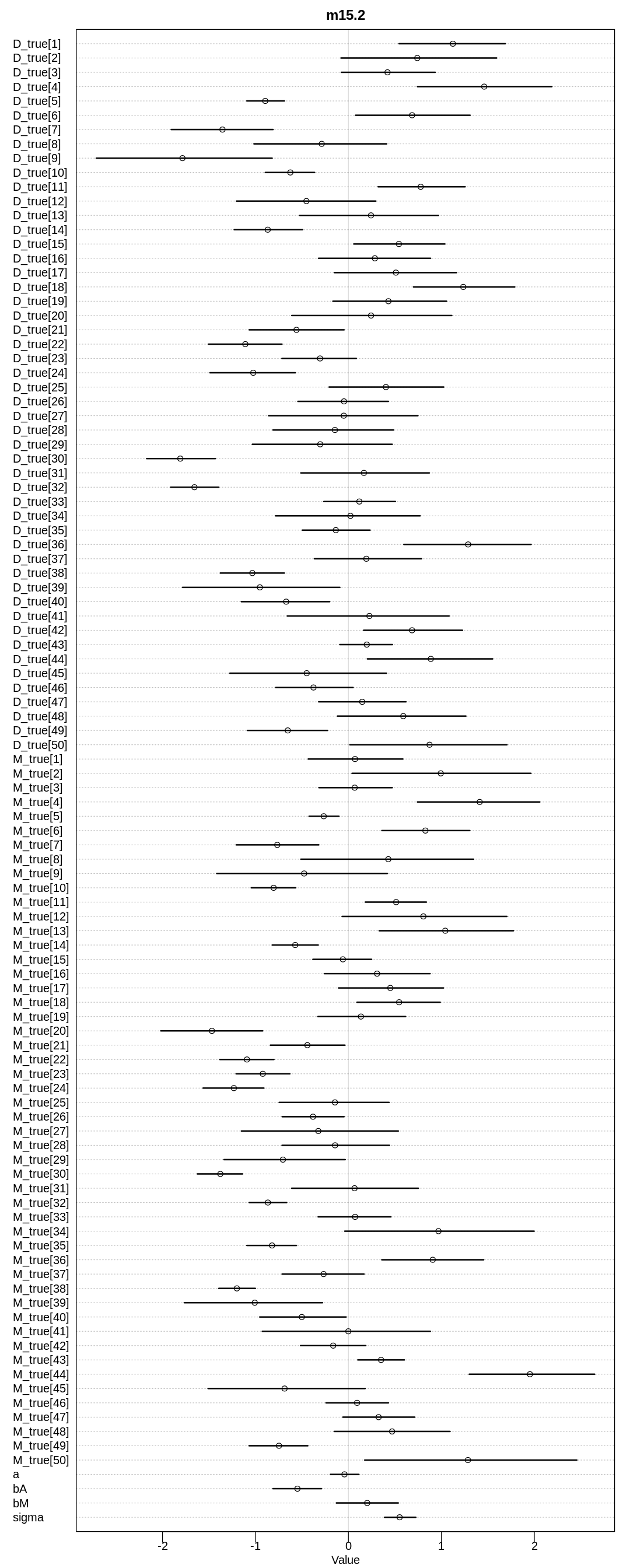

display(precis(m15.2, depth=3), mimetypes="text/plain")

iplot(function() {

plot(precis(m15.2, depth=3), main="m15.2")

}, ar=0.5)

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 1 finished in 0.2 seconds.

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 finished in 0.2 seconds.

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 finished in 0.2 seconds.

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 finished in 0.3 seconds.

All 4 chains finished successfully.

Mean chain execution time: 0.2 seconds.

Total execution time: 0.5 seconds.

mean sd 5.5% 94.5% n_eff Rhat4

D_true[1] 1.16529913 0.37670085 0.56646235 1.77686420 1826.9479 0.9997720

D_true[2] 0.68035140 0.53713821 -0.13498373 1.55454040 1763.5176 1.0022227

D_true[3] 0.42872481 0.33167476 -0.09605938 0.93261400 2683.5404 0.9995092

D_true[4] 1.41154159 0.46577940 0.67784049 2.14802785 2554.3427 0.9985109

D_true[5] -0.90160861 0.12550591 -1.10984500 -0.70248119 2592.0895 0.9990946

D_true[6] 0.65953298 0.39147481 0.04538031 1.27896865 2126.2524 1.0005978

D_true[7] -1.35749882 0.35406903 -1.92839990 -0.77569350 1818.2683 0.9992590

D_true[8] -0.34753309 0.48870640 -1.11989465 0.43857377 1775.4601 1.0015430

D_true[9] -1.87285832 0.60396528 -2.80459145 -0.90207235 1459.0806 1.0019322

D_true[10] -0.61835239 0.16858058 -0.89032025 -0.35274012 2657.2032 1.0005894

D_true[11] 0.76812978 0.27716226 0.33565835 1.21754440 2329.5430 0.9991595

D_true[12] -0.54472513 0.47509818 -1.30452415 0.20983636 1811.4371 1.0012212

D_true[13] 0.17434182 0.50165467 -0.64344098 0.96242766 1080.0249 1.0033488

D_true[14] -0.87181479 0.22336972 -1.23705925 -0.51341138 2245.2291 0.9999715

D_true[15] 0.55659024 0.30202896 0.08249217 1.03231220 2200.4551 0.9999940

D_true[16] 0.30132522 0.38326632 -0.31731629 0.90287237 2868.8095 0.9994837

D_true[17] 0.49857479 0.43252705 -0.18628874 1.19296880 3231.2556 0.9989415

D_true[18] 1.25611387 0.35837104 0.71500242 1.87129685 2111.6199 1.0001413

D_true[19] 0.42369517 0.38116782 -0.16956035 1.03324940 2269.2815 0.9993208

D_true[20] 0.40433882 0.51297364 -0.35406454 1.25353315 1176.9675 1.0014683

D_true[21] -0.56355219 0.33233896 -1.09647050 -0.04003655 2360.7146 0.9996213

D_true[22] -1.10207486 0.25450122 -1.49067225 -0.68943755 2347.1546 1.0005273

D_true[23] -0.26902316 0.26278468 -0.68275595 0.14115404 2774.6269 1.0001873

D_true[24] -1.00045957 0.29883294 -1.47296970 -0.51553198 1730.4090 1.0007305

D_true[25] 0.42674263 0.40008865 -0.19583232 1.06478540 2663.3554 0.9993704

D_true[26] -0.02343933 0.32142629 -0.53034520 0.48795575 2133.5721 1.0029464

D_true[27] -0.03251814 0.49720989 -0.85495812 0.73914262 2200.8593 0.9999593

D_true[28] -0.14182911 0.39517413 -0.77690007 0.48404311 2124.0202 1.0000422

D_true[29] -0.27579654 0.50811564 -1.10165660 0.53418449 2467.4737 0.9991335

D_true[30] -1.80465810 0.23055456 -2.17609660 -1.45517780 2087.2871 1.0008965

D_true[31] 0.16544667 0.43112034 -0.50715942 0.84543276 2966.5765 0.9993029

D_true[32] -1.65694118 0.16025453 -1.90951420 -1.39546590 2298.8496 1.0002954

D_true[33] 0.11802736 0.23494846 -0.25410918 0.48409419 2850.4477 1.0001158

D_true[34] -0.06339266 0.51499171 -0.91162970 0.74493650 1419.7932 1.0014848

D_true[35] -0.12244562 0.22985512 -0.48932804 0.24497560 2508.5167 0.9996304

D_true[36] 1.27047247 0.40131977 0.64126050 1.92421385 2318.8039 0.9998624

D_true[37] 0.22497356 0.35980983 -0.34656786 0.78629118 2595.7440 0.9991507

D_true[38] -1.02029160 0.22501755 -1.37862575 -0.67071761 2704.1224 0.9993599

D_true[39] -0.92104471 0.53912631 -1.78547790 -0.08014792 1824.4961 0.9995028

D_true[40] -0.67496281 0.33746049 -1.20182360 -0.14724809 2433.9734 1.0004294

D_true[41] 0.25872653 0.54692102 -0.61817572 1.13789520 2040.4956 0.9988532

D_true[42] 0.73961065 0.33014936 0.22117780 1.26946220 2414.9662 0.9990285

D_true[43] 0.18995112 0.18223568 -0.09803334 0.48426666 2414.6235 1.0000140

D_true[44] 0.80465016 0.41707592 0.13549761 1.44203035 1351.6125 1.0031868

D_true[45] -0.40386974 0.51067738 -1.18794350 0.43917189 1909.1625 0.9988625

D_true[46] -0.38938278 0.26783573 -0.81655354 0.03900730 2385.6420 0.9999969

D_true[47] 0.13534855 0.29424111 -0.34327577 0.60873387 2989.7362 0.9993935

D_true[48] 0.55514460 0.46916305 -0.18476896 1.28536950 3325.5506 0.9983973

D_true[49] -0.63843072 0.27863705 -1.06624065 -0.18693361 2526.1883 1.0011626

D_true[50] 0.86124113 0.59606065 -0.07979191 1.76178105 1617.5362 1.0017955

a -0.05446510 0.09751551 -0.20976111 0.10457820 1386.6334 1.0004440

bA -0.61351468 0.16049184 -0.87111548 -0.35784110 900.5241 1.0025891

bM 0.05598574 0.16706251 -0.21729289 0.30902512 873.6370 1.0018299

sigma 0.58590288 0.10778943 0.42688904 0.76552462 486.0034 1.0095561

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Informational Message: The current Metropolis proposal is about to be rejected because of the following issue:

Chain 2 Exception: normal_lpdf: Scale parameter is 0, but must be positive! (in '/tmp/RtmpjPjDJw/model-122086d758.stan', line 28, column 4 to column 34)

Chain 2 If this warning occurs sporadically, such as for highly constrained variable types like covariance matrices, then the sampler is fine,

Chain 2 but if this warning occurs often then your model may be either severely ill-conditioned or misspecified.

Chain 2

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Informational Message: The current Metropolis proposal is about to be rejected because of the following issue:

Chain 3 Exception: normal_lpdf: Scale parameter is 0, but must be positive! (in '/tmp/RtmpjPjDJw/model-122086d758.stan', line 28, column 4 to column 34)

Chain 3 If this warning occurs sporadically, such as for highly constrained variable types like covariance matrices, then the sampler is fine,

Chain 3 but if this warning occurs often then your model may be either severely ill-conditioned or misspecified.

Chain 3

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 finished in 0.4 seconds.

Chain 3 finished in 0.3 seconds.

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 finished in 0.4 seconds.

Chain 4 finished in 0.4 seconds.

All 4 chains finished successfully.

Mean chain execution time: 0.4 seconds.

Total execution time: 0.6 seconds.

mean sd 5.5% 94.5% n_eff Rhat4

D_true[1] 1.13980066 0.3672073 0.54473938 1.7421660 2020.261 0.9991496

D_true[2] 0.72989332 0.5503889 -0.14497435 1.6243327 2388.551 0.9985643

D_true[3] 0.41832269 0.3381948 -0.11368834 0.9595620 2588.476 0.9999522

D_true[4] 1.45304387 0.4546278 0.73780377 2.2073164 1917.647 1.0003916

D_true[5] -0.89750179 0.1241661 -1.09859765 -0.6993325 2744.301 1.0005105

D_true[6] 0.69134812 0.3979001 0.06562878 1.3267263 2694.820 0.9993062

D_true[7] -1.35943323 0.3459120 -1.92849000 -0.8064240 2797.510 0.9995818

D_true[8] -0.28779986 0.4916344 -1.10661110 0.4921008 2049.506 1.0009338

D_true[9] -1.77196219 0.6044402 -2.69894100 -0.7984069 1621.656 1.0006758

D_true[10] -0.62027647 0.1645052 -0.88511736 -0.3583054 3348.679 0.9988624

D_true[11] 0.77699272 0.2810548 0.32338724 1.2301131 2022.770 0.9989415

D_true[12] -0.46395623 0.4917993 -1.24678390 0.3100883 1947.360 0.9988687

D_true[13] 0.18466706 0.4984056 -0.60521819 0.9620114 1417.958 0.9987119

D_true[14] -0.85982422 0.2266755 -1.21400160 -0.4921531 2480.290 1.0002637

D_true[15] 0.54116474 0.2880502 0.09933228 1.0134607 3103.837 0.9999754

D_true[16] 0.28906162 0.3791991 -0.33203928 0.8832713 2187.039 0.9993098

D_true[17] 0.49396919 0.4189143 -0.17879638 1.1568778 2478.119 0.9986335

D_true[18] 1.24608606 0.3573026 0.66986521 1.8242623 2686.657 1.0001980

D_true[19] 0.42154247 0.3783306 -0.16917162 1.0305995 2987.535 1.0002947

D_true[20] 0.27708897 0.5574021 -0.57884490 1.2197770 1252.782 0.9987722

D_true[21] -0.53963402 0.3207615 -1.05170550 -0.0102739 3177.273 0.9993598

D_true[22] -1.10708126 0.2516425 -1.50025465 -0.6952419 2456.320 0.9991992

D_true[23] -0.29196223 0.2548001 -0.69192237 0.1285582 2615.308 0.9985269

D_true[24] -1.03161297 0.3008844 -1.52290475 -0.5511805 2632.526 0.9985800

D_true[25] 0.41285163 0.4124108 -0.22655639 1.0666809 3259.927 0.9983697

D_true[26] -0.04792652 0.3034968 -0.53220768 0.4272377 2364.137 0.9996221

D_true[27] -0.04623642 0.5140159 -0.85306116 0.7662948 2589.839 0.9988235

D_true[28] -0.16844509 0.3949712 -0.84551703 0.4571312 1849.513 1.0019656

D_true[29] -0.28993034 0.5012341 -1.06958630 0.5291909 2292.606 0.9985251

D_true[30] -1.80438402 0.2319326 -2.16829345 -1.4303780 1944.205 1.0026937

⋮ ⋮ ⋮ ⋮ ⋮ ⋮ ⋮

M_true[25] -0.152868519 0.36721297 -0.74386089 0.42564432 3721.3290 0.9990446

M_true[26] -0.378903023 0.20604708 -0.70358575 -0.05383121 3270.8281 0.9989264

M_true[27] -0.320480175 0.49159103 -1.10519135 0.47542391 2717.3530 1.0002243

M_true[28] -0.139549026 0.34161527 -0.67233812 0.40379155 2575.1460 0.9993844

M_true[29] -0.718199643 0.42032015 -1.36067610 -0.04640796 3469.1455 0.9989717

M_true[30] -1.385296362 0.15555509 -1.62810960 -1.14155055 2945.3393 1.0006579

M_true[31] 0.064082656 0.43066441 -0.62428938 0.74098219 2936.6891 0.9992723

M_true[32] -0.860433145 0.12348629 -1.05343770 -0.66506229 2970.6106 0.9998388

M_true[33] 0.071180967 0.24761218 -0.31449523 0.47968621 2977.6313 0.9992579

M_true[34] 0.965363880 0.62923413 -0.02728858 1.98761395 2560.6416 0.9983876

M_true[35] -0.826105916 0.15538014 -1.06481480 -0.57270177 3231.9187 0.9998117

M_true[36] 0.888540910 0.32103142 0.39403025 1.41934490 2522.7284 0.9992918

M_true[37] -0.282542098 0.28316057 -0.73776220 0.16931516 2917.1523 1.0009205

M_true[38] -1.196606841 0.12610830 -1.39632930 -0.98794782 2274.0078 0.9994018

M_true[39] -1.006728396 0.50639594 -1.81955690 -0.19055231 2300.9962 1.0021731

M_true[40] -0.507620174 0.29019788 -0.96763578 -0.03805253 2848.2815 1.0002209

M_true[41] 0.003610646 0.57901820 -0.88233998 0.93984612 2575.4191 0.9998823

M_true[42] -0.168825699 0.23540747 -0.54638417 0.22476800 3068.1449 0.9990647

M_true[43] 0.354329161 0.16036737 0.09608789 0.60301057 3339.1457 0.9991328

M_true[44] 1.968909632 0.42897395 1.28348095 2.66120435 2090.2837 0.9992804

M_true[45] -0.666842085 0.52079232 -1.50119905 0.15627512 2788.2095 0.9989813

M_true[46] 0.083925491 0.21217465 -0.24694421 0.42397530 2487.9784 0.9991141

M_true[47] 0.317316058 0.25239524 -0.08739298 0.71246712 2623.8162 0.9998099

M_true[48] 0.474560523 0.41411576 -0.19063167 1.14342125 2243.7097 0.9988102

M_true[49] -0.740652101 0.20116619 -1.06708950 -0.42189996 2876.6849 0.9992264

M_true[50] 1.284739162 0.71606319 0.16746741 2.44926415 2683.6225 0.9987561

a -0.042854779 0.09496423 -0.19077060 0.11008004 1648.8423 0.9996295

bA -0.544299049 0.16209833 -0.81209181 -0.29511154 996.5373 1.0028343

bM 0.189021324 0.20999733 -0.16315066 0.51688197 695.7240 1.0021214

sigma 0.565245555 0.10878059 0.40696218 0.74226751 562.8355 1.0015088

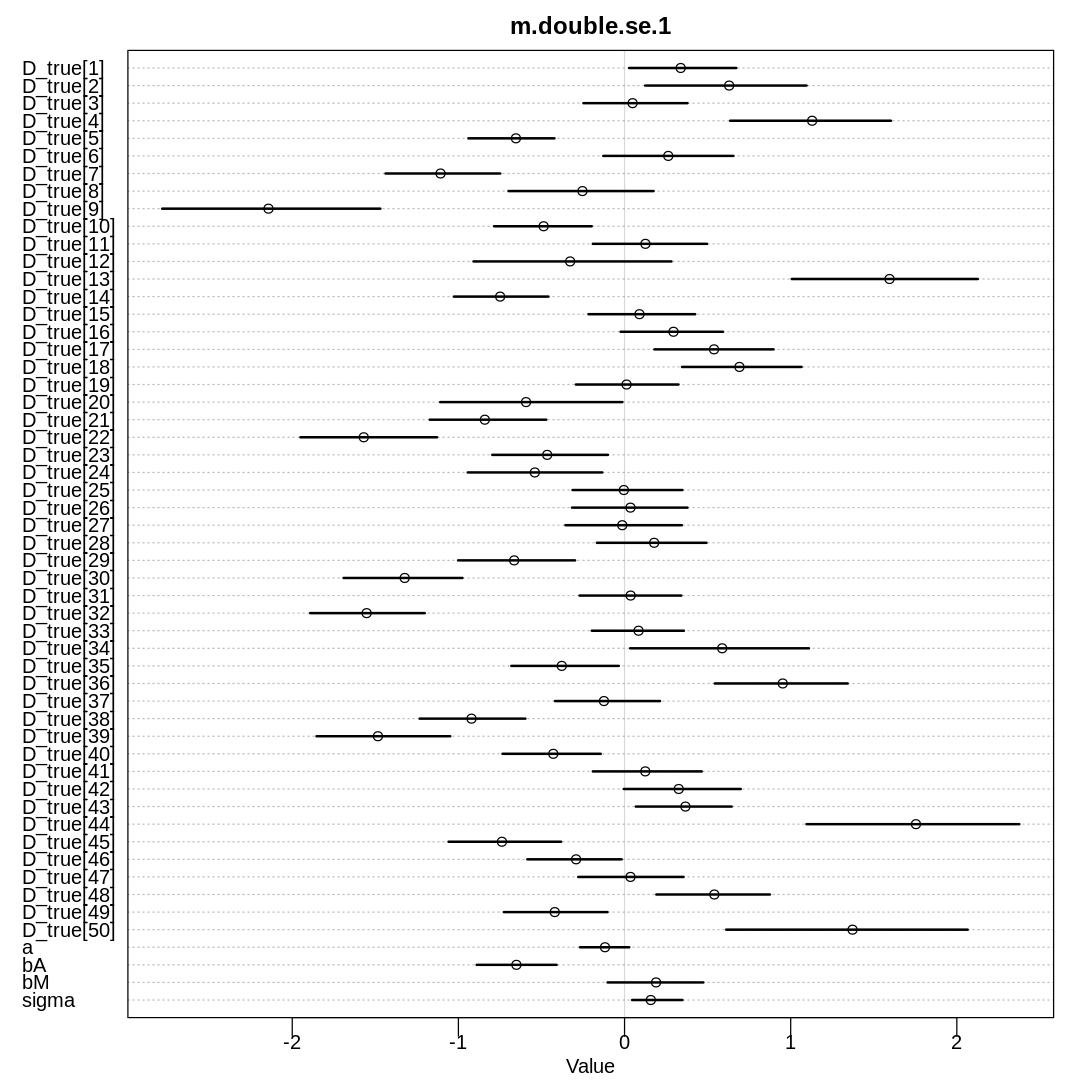

Doubling the standard error:

d$doubleSE = 2*d$Divorce.SE

dlist <- list(

D_obs = standardize(d$Divorce),

D_sd = d$doubleSE / sd(d$Divorce),

M = standardize(d$Marriage),

A = standardize(d$MedianAgeMarriage),

N = nrow(d)

)

m.double.se.1 <- ulam(

alist(

D_obs ~ dnorm(D_true, D_sd),

vector[N]:D_true ~ dnorm(mu, sigma),

mu <- a + bA * A + bM * M,

a ~ dnorm(0, 0.2),

bA ~ dnorm(0, 0.5),

bM ~ dnorm(0, 0.5),

sigma ~ dexp(1)

),

cmdstan = TRUE, data = dlist, chains = 4, cores = 4

)

display(precis(m.double.se.1, depth=3), mimetypes="text/plain")

iplot(function() {

plot(precis(m.double.se.1, depth=3), main="m.double.se.1")

}, ar=1)

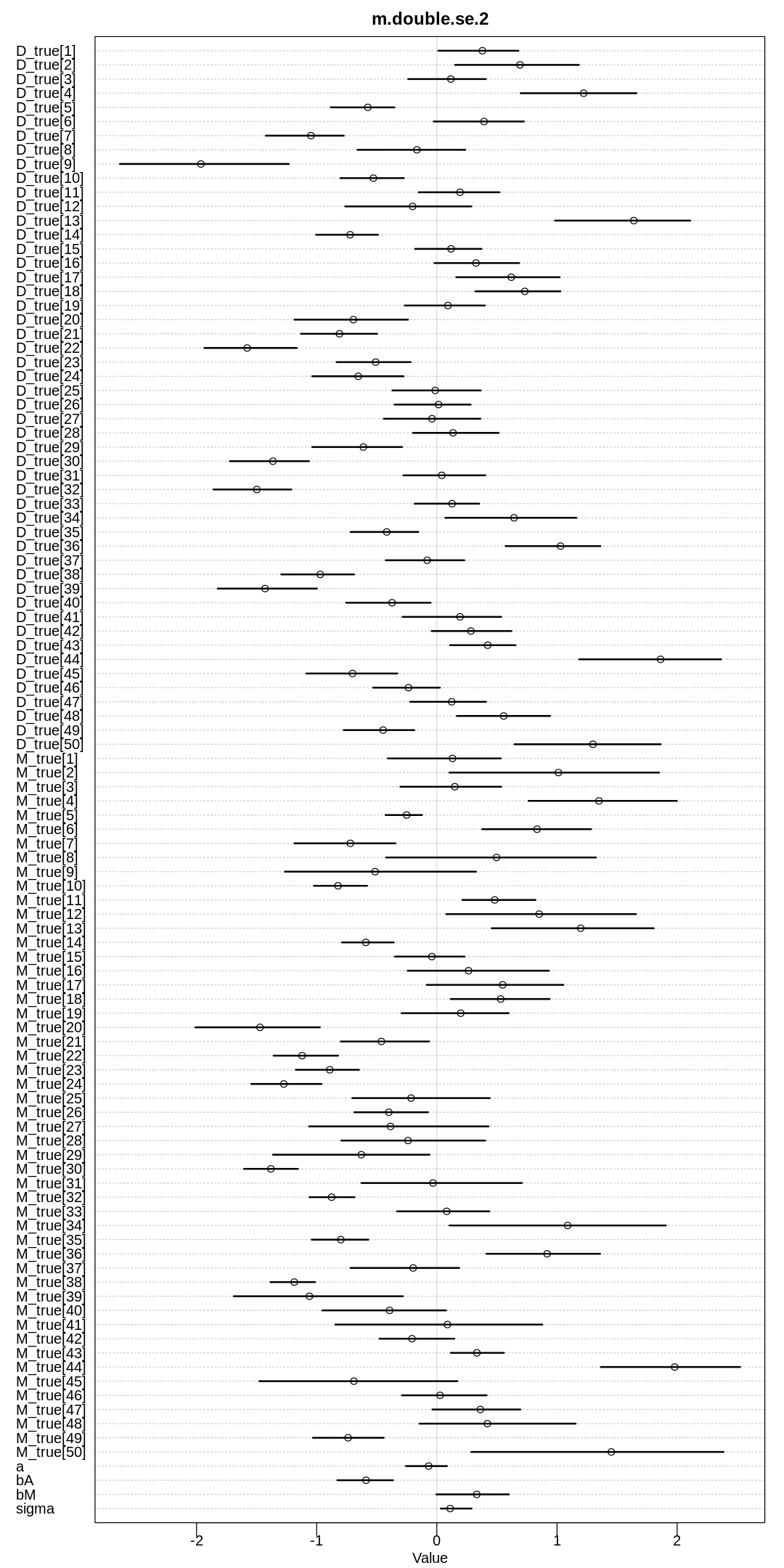

dlist <- list(

D_obs = standardize(d$Divorce),

D_sd = d$doubleSE / sd(d$Divorce),

M_obs = standardize(d$Marriage),

M_sd = d$Marriage.SE / sd(d$Marriage),

A = standardize(d$MedianAgeMarriage),

N = nrow(d)

)

m.double.se.2 <- ulam(

alist(

D_obs ~ dnorm(D_true, D_sd),

vector[N]:D_true ~ dnorm(mu, sigma),

mu <- a + bA * A + bM * M_true[i],

M_obs ~ dnorm(M_true, M_sd),

vector[N]:M_true ~ dnorm(0, 1),

a ~ dnorm(0, 0.2),

bA ~ dnorm(0, 0.5),

bM ~ dnorm(0, 0.5),

sigma ~ dexp(1)

),

cmdstan = TRUE, data = dlist, chains = 4, cores = 4

)

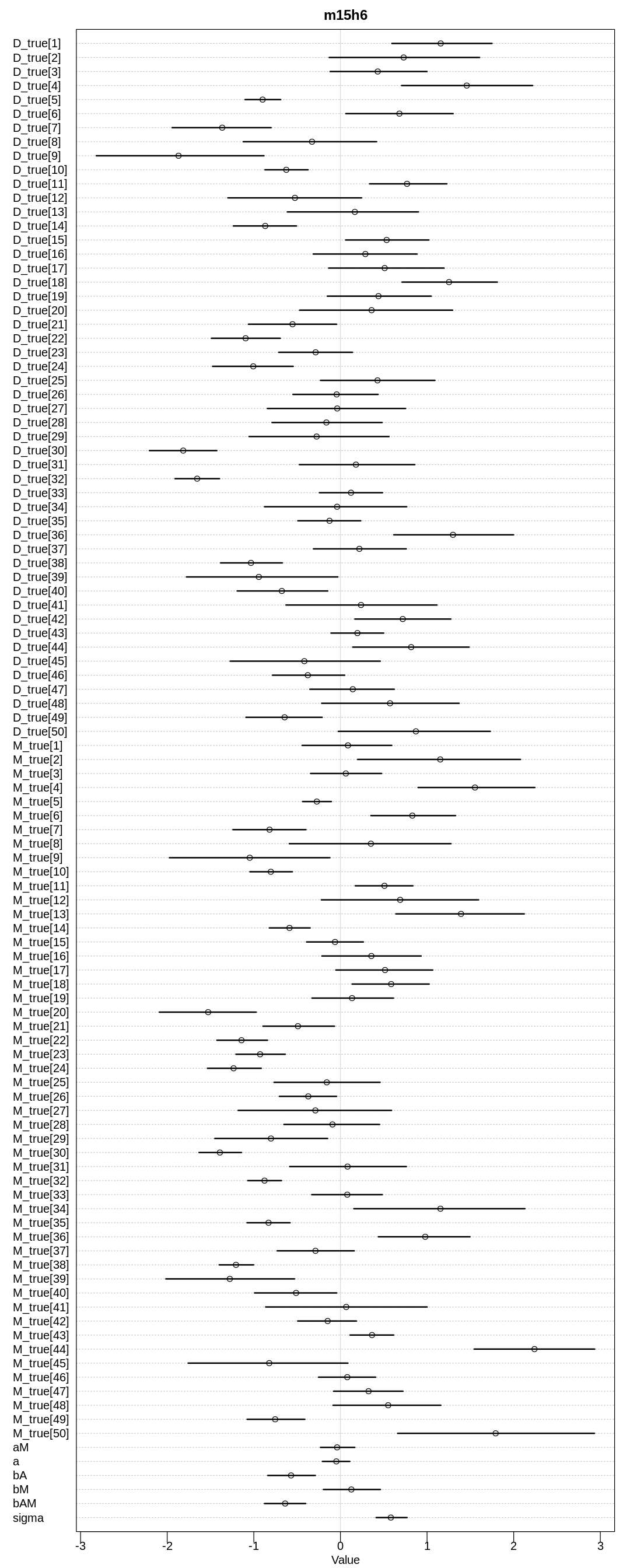

display(precis(m.double.se.2, depth=3), mimetypes="text/plain")

iplot(function() {

plot(precis(m.double.se.2, depth=3), main="m.double.se.2")

}, ar=0.5)

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 finished in 0.4 seconds.

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 finished in 0.7 seconds.

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 1 finished in 0.8 seconds.

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 finished in 2.2 seconds.

All 4 chains finished successfully.

Mean chain execution time: 1.0 seconds.

Total execution time: 2.2 seconds.

Warning: 26 of 2000 (1.0%) transitions ended with a divergence.

This may indicate insufficient exploration of the posterior distribution.

Possible remedies include:

* Increasing adapt_delta closer to 1 (default is 0.8)

* Reparameterizing the model (e.g. using a non-centered parameterization)

* Using informative or weakly informative prior distributions

22 of 2000 (1.0%) transitions hit the maximum treedepth limit of 10 or 2^10-1 leapfrog steps.

Trajectories that are prematurely terminated due to this limit will result in slow exploration.

Increasing the max_treedepth limit can avoid this at the expense of more computation.

If increasing max_treedepth does not remove warnings, try to reparameterize the model.

mean sd 5.5% 94.5% n_eff Rhat4

D_true[1] 0.33796572 0.21582894 0.027589873 0.67252494 236.76301 1.014180

D_true[2] 0.62947793 0.31168698 0.124353785 1.09543480 220.40308 1.020648

D_true[3] 0.04841299 0.20678361 -0.247430415 0.37882753 549.05169 1.002715

D_true[4] 1.12905704 0.31365612 0.635662415 1.60328915 214.67947 1.026316

D_true[5] -0.65377791 0.16034456 -0.939621315 -0.42165778 173.23045 1.023178

D_true[6] 0.26316311 0.24978849 -0.128048560 0.65538663 306.61900 1.009790

D_true[7] -1.10742594 0.21732411 -1.438743350 -0.74813529 430.64113 1.011535

D_true[8] -0.25339828 0.28199675 -0.698303350 0.17495354 255.05753 1.013457

D_true[9] -2.14312320 0.41295933 -2.782816350 -1.46995160 206.28805 1.019418

D_true[10] -0.48705608 0.18567907 -0.785085160 -0.19763261 465.86484 1.008968

D_true[11] 0.12557759 0.22536052 -0.190852545 0.49629107 233.26458 1.014086

D_true[12] -0.32751822 0.37311658 -0.908953875 0.28187891 229.07483 1.014311

D_true[13] 1.59482665 0.35063885 1.006406650 2.12598575 138.94207 1.027431

D_true[14] -0.74863071 0.18364053 -1.027192450 -0.45797249 725.91562 1.004536

D_true[15] 0.08982116 0.20895956 -0.217009440 0.42511147 323.31161 1.004783

D_true[16] 0.29448084 0.20254489 -0.023449970 0.59243579 359.42212 1.003181

D_true[17] 0.53855027 0.23803871 0.179682630 0.89699193 372.93201 1.005101

D_true[18] 0.69156478 0.23760357 0.345972585 1.06529955 219.43478 1.015470

D_true[19] 0.01196021 0.20513287 -0.292825305 0.32458850 481.09538 1.001699

D_true[20] -0.59295331 0.35022430 -1.109419600 -0.01348071 259.99886 1.012581

D_true[21] -0.84093494 0.22636292 -1.172218850 -0.47132749 410.37739 1.005227

D_true[22] -1.56966665 0.26253209 -1.950601650 -1.12831050 182.68226 1.022074

D_true[23] -0.46547881 0.22051994 -0.796227160 -0.10014746 368.72365 1.007733

D_true[24] -0.53996588 0.25540117 -0.943720300 -0.13405369 262.57847 1.020685

D_true[25] -0.00404805 0.20885334 -0.313141805 0.34816536 462.60509 1.001810

D_true[26] 0.03556651 0.21453105 -0.316112430 0.37854043 414.39580 1.003293

D_true[27] -0.01375848 0.22722340 -0.355973710 0.34481386 434.48602 1.001883

D_true[28] 0.17821194 0.21297761 -0.166225005 0.49309792 323.32638 1.006560

D_true[29] -0.66441592 0.23032608 -1.001813750 -0.29779869 483.74924 1.007189

D_true[30] -1.32330272 0.23572433 -1.690969800 -0.97576939 202.44262 1.034145

D_true[31] 0.03665304 0.20061424 -0.270965560 0.34164883 481.31775 1.002319

D_true[32] -1.55229859 0.21759792 -1.892036100 -1.20229905 228.69233 1.028532

D_true[33] 0.08431884 0.17853591 -0.197324755 0.35734198 468.68184 1.002113

D_true[34] 0.58767295 0.34871339 0.033809024 1.10981235 228.57250 1.019512

D_true[35] -0.37795638 0.20634101 -0.681413805 -0.03474520 312.20969 1.006037

D_true[36] 0.95217628 0.25611169 0.542974750 1.34295930 200.12218 1.018736

D_true[37] -0.12412461 0.20532204 -0.418619655 0.21359914 480.65174 1.000627

D_true[38] -0.92107028 0.19898630 -1.233116650 -0.59688532 313.20667 1.019827

D_true[39] -1.48428354 0.26331386 -1.853833450 -1.04920445 286.03251 1.019377

D_true[40] -0.42893058 0.19022471 -0.734642685 -0.14324799 706.00854 1.004737

D_true[41] 0.12493998 0.21404886 -0.190648675 0.46486038 438.52776 1.004675

D_true[42] 0.32613166 0.22839459 -0.004690787 0.69938573 275.37900 1.006487

D_true[43] 0.36641722 0.18829718 0.068024864 0.64484670 193.80211 1.015689

D_true[44] 1.75382435 0.40725423 1.095382350 2.37603800 146.49939 1.032014

D_true[45] -0.73812669 0.23060977 -1.058904000 -0.38183290 409.10015 1.009005

D_true[46] -0.29157763 0.19121975 -0.585240370 -0.01712644 542.94456 1.001173

D_true[47] 0.03571322 0.20550448 -0.278653425 0.35532445 411.69635 1.003303

D_true[48] 0.54032926 0.22679137 0.191324580 0.87468402 285.35007 1.009658

D_true[49] -0.42001603 0.20432710 -0.726107710 -0.10278328 490.55769 1.008375

D_true[50] 1.37216976 0.46116025 0.611850845 2.06541330 173.19466 1.030323

a -0.11762847 0.09254747 -0.268001550 0.02835270 154.73145 1.002047

bA -0.65083652 0.15185027 -0.889979940 -0.40885810 163.46987 1.019597

bM 0.18958345 0.18231647 -0.102041000 0.47425205 187.29157 1.021093

sigma 0.15797996 0.09803259 0.045619303 0.34843333 40.21924 1.110314

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Informational Message: The current Metropolis proposal is about to be rejected because of the following issue:

Chain 1 Exception: normal_lpdf: Scale parameter is 0, but must be positive! (in '/tmp/RtmpjPjDJw/model-12fa46c90.stan', line 28, column 4 to column 34)

Chain 1 If this warning occurs sporadically, such as for highly constrained variable types like covariance matrices, then the sampler is fine,

Chain 1 but if this warning occurs often then your model may be either severely ill-conditioned or misspecified.

Chain 1

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Informational Message: The current Metropolis proposal is about to be rejected because of the following issue:

Chain 4 Exception: normal_lpdf: Scale parameter is 0, but must be positive! (in '/tmp/RtmpjPjDJw/model-12fa46c90.stan', line 28, column 4 to column 34)

Chain 4 If this warning occurs sporadically, such as for highly constrained variable types like covariance matrices, then the sampler is fine,

Chain 4 but if this warning occurs often then your model may be either severely ill-conditioned or misspecified.

Chain 4

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 finished in 0.7 seconds.

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 finished in 1.3 seconds.

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 finished in 1.9 seconds.

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 finished in 2.8 seconds.

All 4 chains finished successfully.

Mean chain execution time: 1.7 seconds.

Total execution time: 2.9 seconds.

Warning: 445 of 2000 (22.0%) transitions ended with a divergence.

This may indicate insufficient exploration of the posterior distribution.

Possible remedies include:

* Increasing adapt_delta closer to 1 (default is 0.8)

* Reparameterizing the model (e.g. using a non-centered parameterization)

* Using informative or weakly informative prior distributions

mean sd 5.5% 94.5% n_eff Rhat4

D_true[1] 0.37953293 0.2398603 0.01237775 0.6787418 9.805153 1.146924

D_true[2] 0.69196627 0.3523082 0.15158532 1.1824500 12.469865 1.123998

D_true[3] 0.11663164 0.2254270 -0.23885513 0.4072780 6.653921 1.230899

D_true[4] 1.22158311 0.3119979 0.69807085 1.6619734 66.510479 1.067652

D_true[5] -0.57465848 0.1764928 -0.88366910 -0.3530077 7.000977 1.195604

D_true[6] 0.39357630 0.2683339 -0.02626940 0.7254170 13.364836 1.138957

D_true[7] -1.04783714 0.2253261 -1.42403550 -0.7743593 12.841747 1.116780

D_true[8] -0.16575146 0.3102746 -0.66099346 0.2364567 12.702190 1.116254

D_true[9] -1.96373960 0.4200831 -2.63845265 -1.2318186 400.513116 1.013574

D_true[10] -0.52815005 0.1677250 -0.80202447 -0.2736530 390.916477 1.010018

D_true[11] 0.19327236 0.2187164 -0.15183088 0.5218317 200.169074 1.036598

D_true[12] -0.20145125 0.3498259 -0.76296994 0.2873224 16.480917 1.109628

D_true[13] 1.63929540 0.3871895 0.98354415 2.1092200 7.252962 1.209580

D_true[14] -0.72190047 0.1711595 -1.00516870 -0.4894583 19.605170 1.087852

D_true[15] 0.11847032 0.1899755 -0.18137113 0.3714890 12.804508 1.141989

D_true[16] 0.32601672 0.2262484 -0.02023291 0.6848860 88.587983 1.037856

D_true[17] 0.61935379 0.2875835 0.16176984 1.0222969 5.665619 1.287454

D_true[18] 0.73238124 0.2407032 0.32078948 1.0285387 15.077739 1.106224

D_true[19] 0.09252788 0.2342369 -0.26708202 0.3998111 7.494055 1.211244

D_true[20] -0.69459447 0.2860700 -1.18549245 -0.2400368 264.378090 1.014034

D_true[21] -0.81075193 0.2038724 -1.13111530 -0.4978065 408.929931 1.016963

D_true[22] -1.57889329 0.2371380 -1.93478115 -1.1654505 421.561441 1.016519

D_true[23] -0.50900328 0.1986487 -0.83406531 -0.2181990 133.318558 1.035616

D_true[24] -0.65350689 0.2366713 -1.03768115 -0.2767566 309.108215 1.015143

D_true[25] -0.01350890 0.2223810 -0.37072281 0.3659846 483.597192 1.012460

D_true[26] 0.01433202 0.2046745 -0.35245535 0.2819965 166.364248 1.033950

D_true[27] -0.04005472 0.2447914 -0.44188560 0.3629682 570.376649 1.009484

D_true[28] 0.13487870 0.2242061 -0.19848964 0.5155723 353.172665 1.010019

D_true[29] -0.61159603 0.2547695 -1.03730385 -0.2895010 11.250510 1.138764

D_true[30] -1.36428192 0.2051555 -1.72247665 -1.0638615 591.624331 1.004585

⋮ ⋮ ⋮ ⋮ ⋮ ⋮ ⋮

M_true[25] -0.21450375 0.37080789 -0.704379665 0.44097338 67.650780 1.052598

M_true[26] -0.39950867 0.19107744 -0.686060750 -0.07300721 246.048323 1.018746

M_true[27] -0.38521611 0.48143315 -1.063819300 0.43026703 39.630029 1.060365

M_true[28] -0.23981025 0.39151857 -0.795504935 0.40261822 8.980552 1.149880

M_true[29] -0.62854469 0.44034875 -1.364395950 -0.06096430 11.298713 1.130044

M_true[30] -1.38141541 0.13872286 -1.605390000 -1.15659475 913.607736 1.011502

M_true[31] -0.03150485 0.43527563 -0.626935000 0.70815564 15.153782 1.093691

M_true[32] -0.87557438 0.11473507 -1.060731000 -0.68501714 833.805459 1.009311

M_true[33] 0.08227647 0.23253696 -0.332239370 0.43675595 641.999055 1.007397

M_true[34] 1.08883925 0.57306156 0.104921880 1.90639830 48.956544 1.054365

M_true[35] -0.79911565 0.14882447 -1.041555950 -0.56968936 287.250840 1.008154

M_true[36] 0.91791597 0.29856559 0.411716810 1.35920220 400.275892 1.014239

M_true[37] -0.19707880 0.30266146 -0.717625440 0.18640400 7.613847 1.173246

M_true[38] -1.18629699 0.11594019 -1.384891150 -1.01389470 187.089798 1.025461

M_true[39] -1.06061164 0.43551755 -1.690460850 -0.28232032 179.154085 1.020598

M_true[40] -0.39334273 0.34190572 -0.952861170 0.07671980 7.042950 1.199141

M_true[41] 0.08824213 0.54307125 -0.844324385 0.87879552 54.645211 1.040951

M_true[42] -0.20635177 0.21146470 -0.477607215 0.14519977 15.547123 1.089552

M_true[43] 0.33338974 0.13822960 0.115647790 0.55802736 2154.259473 1.000542

M_true[44] 1.97973930 0.36420964 1.364875800 2.52349315 623.179581 1.004525

M_true[45] -0.68998058 0.50541114 -1.477439800 0.17077350 1107.339486 1.004155

M_true[46] 0.02659864 0.23250230 -0.290543000 0.41404550 8.907110 1.196804

M_true[47] 0.36215763 0.23708300 -0.037589007 0.69513531 55.666390 1.047142

M_true[48] 0.42050286 0.40579333 -0.144471320 1.15544260 62.716890 1.041190

M_true[49] -0.73834201 0.18019941 -1.031957950 -0.44189984 900.957758 1.004596

M_true[50] 1.45234177 0.66575837 0.286931630 2.38665805 52.409417 1.054564

a -0.06795557 0.11847845 -0.257483800 0.08447670 5.230863 1.321037

bA -0.58923142 0.13619825 -0.828265435 -0.36454046 312.111245 1.020398

bM 0.33241402 0.18764196 -0.003598285 0.59993062 68.101948 1.071576

sigma 0.11124654 0.09173301 0.033021518 0.28845468 17.889911 1.152191

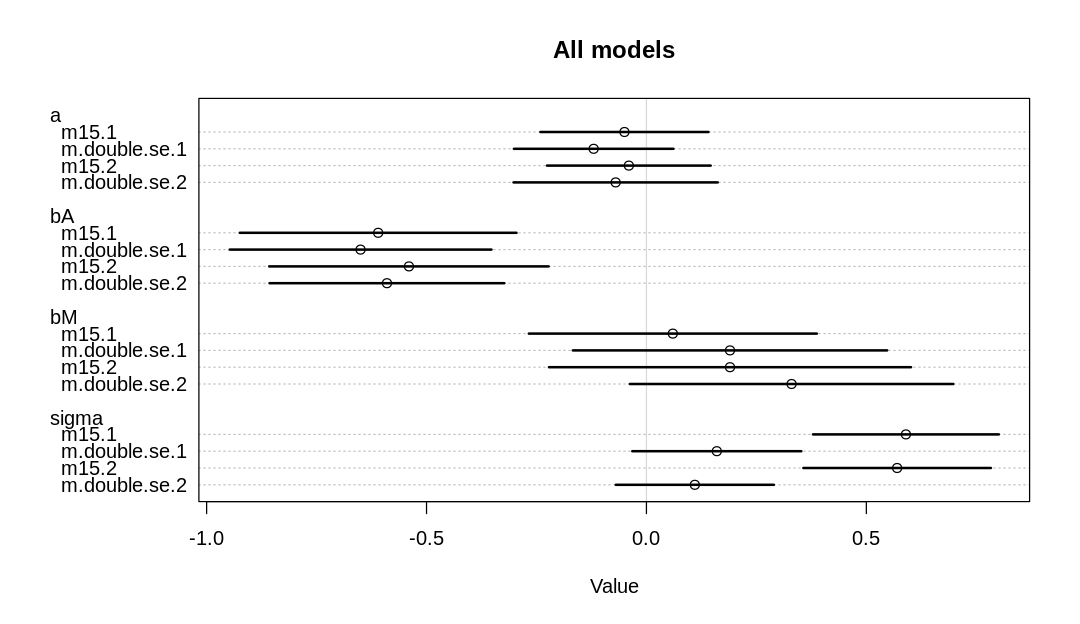

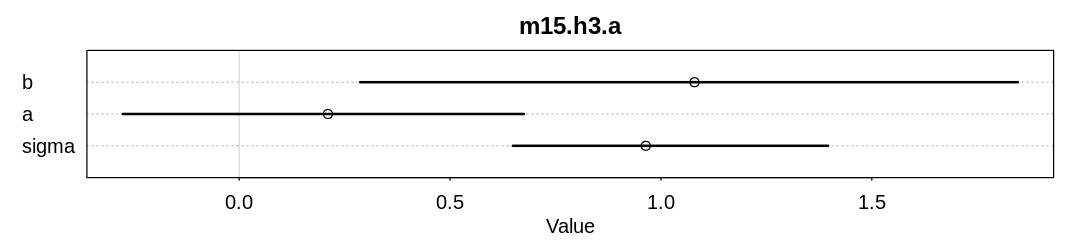

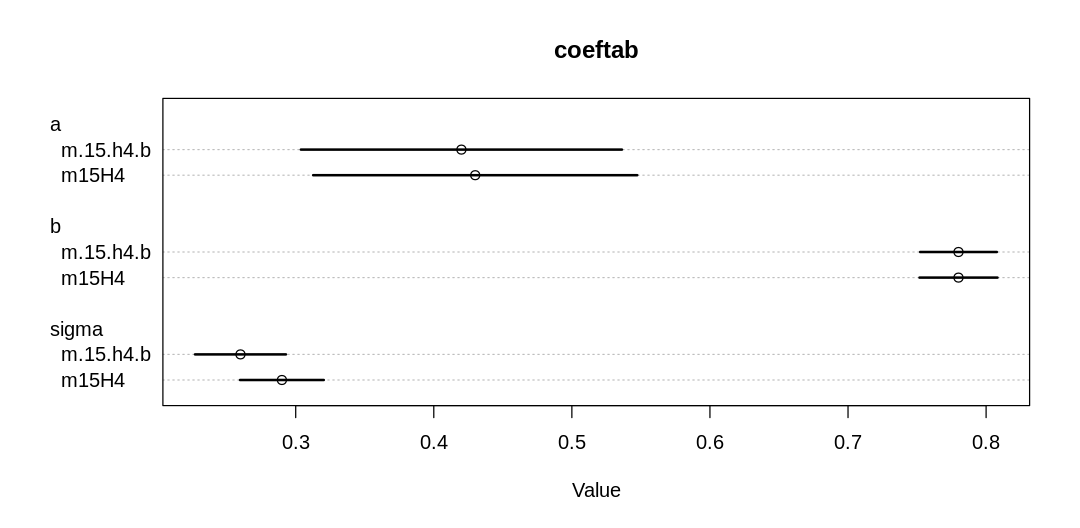

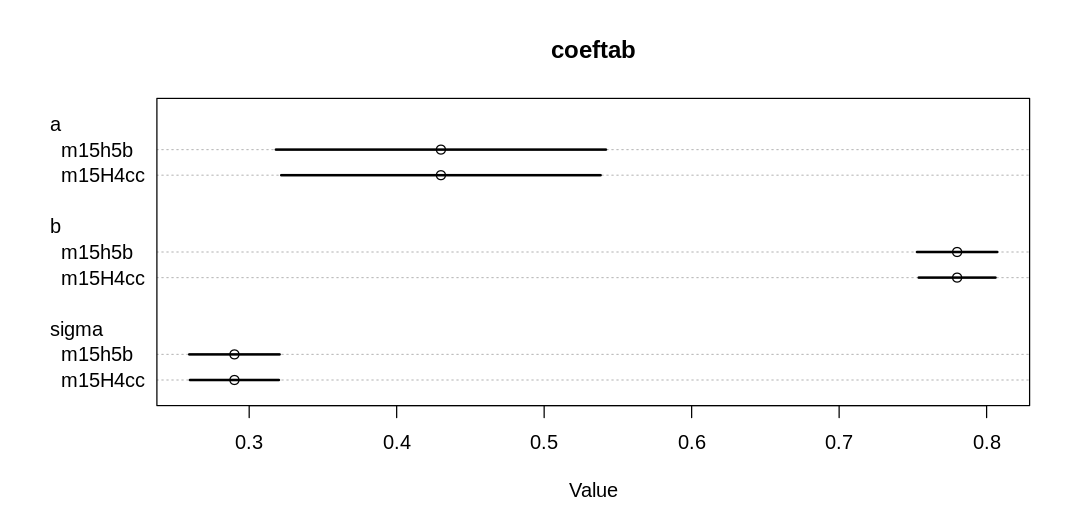

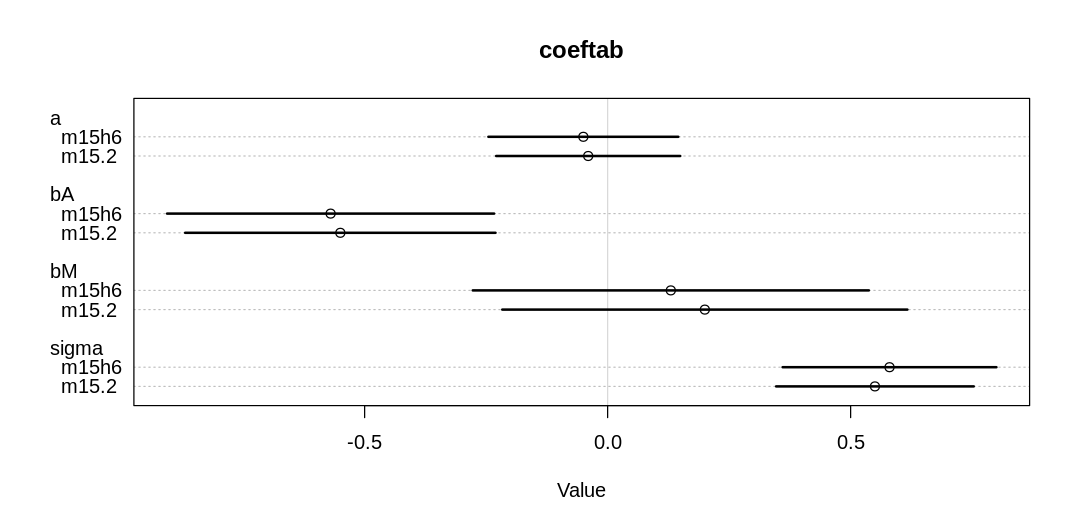

Comparing the most important parameters:

iplot(function() {

plot(

coeftab(m.double.se.2, m15.2, m.double.se.1, m15.1), pars=c("a", "bA", "bM", "sigma"),

main="All models"

)

}, ar=1.7)

Notice we are getting some divergent transitions. It’s likely we’re running into these issues because there is more parameter space to explore; we’re less certain about all our inferences and so we need to cover more possibilities. Like vague priors, this can lead to divergent transitions.

The a, bA, and bM parameters have increased. With less certainty in the measurements, it’s

more plausible that a stronger relationship holds (similar to what we saw in Figure 15.3). In

contrast, the sigma parameter has decreased, because we can now explain more variation in terms of

measurement error than general noise.

We also see, in general, more uncertainty in parameter estimates across the board (wider HDPI bars).

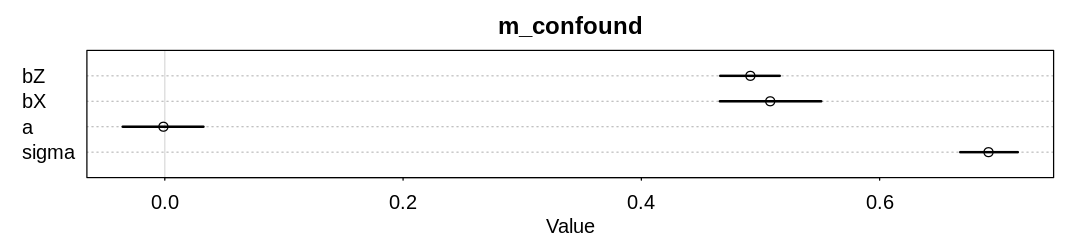

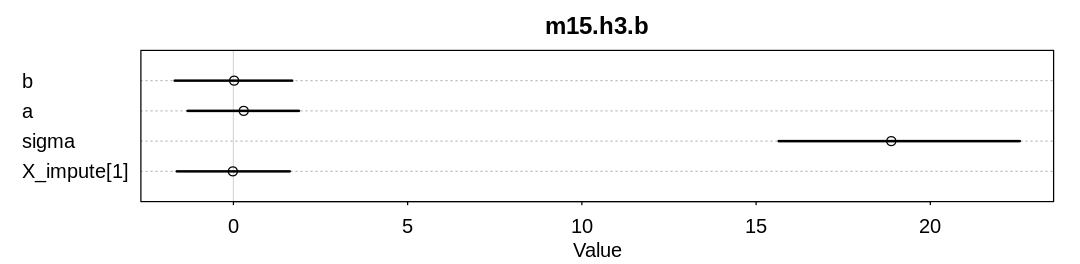

15M4. Simulate data from this DAG: \(X \rightarrow Y \rightarrow Z\). Now fit a model that predicts \(Y\) using both \(X\) and \(Z\). What kind of confound arises, in terms of inferring the causal influence of \(X\) on \(Y\)?

Answer. First lets simulate, with normal distributions:

N <- 1000

X <- rnorm(N)

Y <- rnorm(N, mean=X)

Z <- rnorm(N, mean=Y)

dat_list <- list(

X = X,

Y = Y,

Z = Z

)

m_confound <- ulam(

alist(

Y ~ dnorm(mu, sigma),

mu <- a + bX*X + bZ*Z,

c(a, bX, bZ) ~ dnorm(0, 1),

sigma ~ dexp(1)

), cmdstan = TRUE, data=dat_list, chains=4, cores=4

)

display(precis(m_confound, depth=3), mimetypes="text/plain")

iplot(function() {

plot(precis(m_confound, depth=3), main="m_confound")

}, ar=4.5)

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Informational Message: The current Metropolis proposal is about to be rejected because of the following issue:

Chain 1 Exception: normal_lpdf: Scale parameter is 0, but must be positive! (in '/tmp/RtmpjPjDJw/model-122e9e7fe8.stan', line 21, column 4 to column 29)

Chain 1 If this warning occurs sporadically, such as for highly constrained variable types like covariance matrices, then the sampler is fine,

Chain 1 but if this warning occurs often then your model may be either severely ill-conditioned or misspecified.

Chain 1

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 finished in 0.8 seconds.

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 finished in 0.8 seconds.

Chain 3 finished in 0.9 seconds.

Chain 4 finished in 0.8 seconds.

All 4 chains finished successfully.

Mean chain execution time: 0.8 seconds.

Total execution time: 0.9 seconds.

mean sd 5.5% 94.5% n_eff Rhat4

bZ 0.4915055 0.01581945 0.46620708 0.51615314 1370.006 1.001106

bX 0.5081546 0.02644927 0.46587723 0.55098527 1230.789 1.002709

a -0.0011672 0.02145382 -0.03532943 0.03244627 1503.938 1.001742

sigma 0.6913835 0.01513483 0.66766894 0.71596408 1687.319 1.001510

The confound we’re running into is post-treatment bias (conditioning on the outcome).

In this case \(Z\) is a better predictor of \(Y\) than \(X\) (notice the credible intervals). This is because the function between \(X\) and \(Y\) is the same as the function between \(Y\) and \(Z\), specifically the normal distribution with standard deviation equal one. Since \(Y\) will take on more dispersed values than \(X\), and \(Z\) more dispersed values than \(Y\), the more widely dispersed values of \(Z\) will predict \(Y\) better.

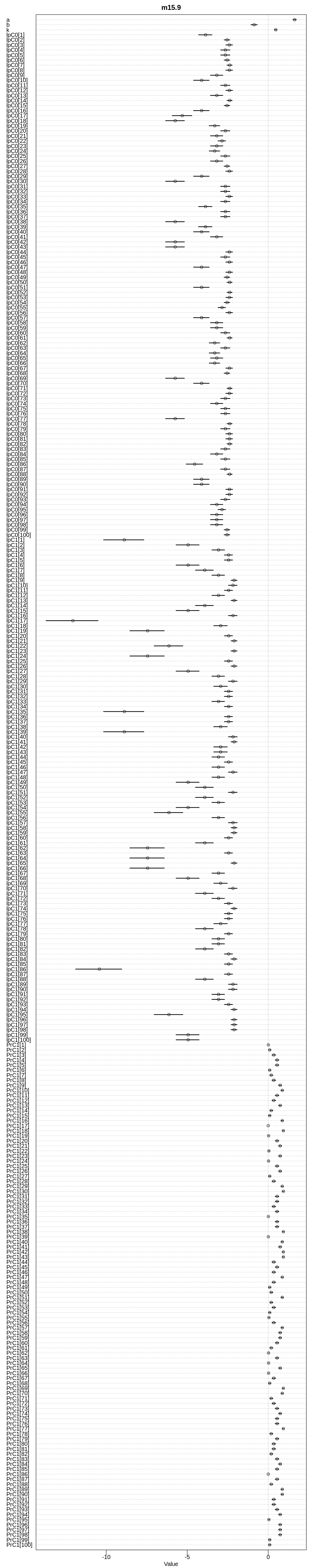

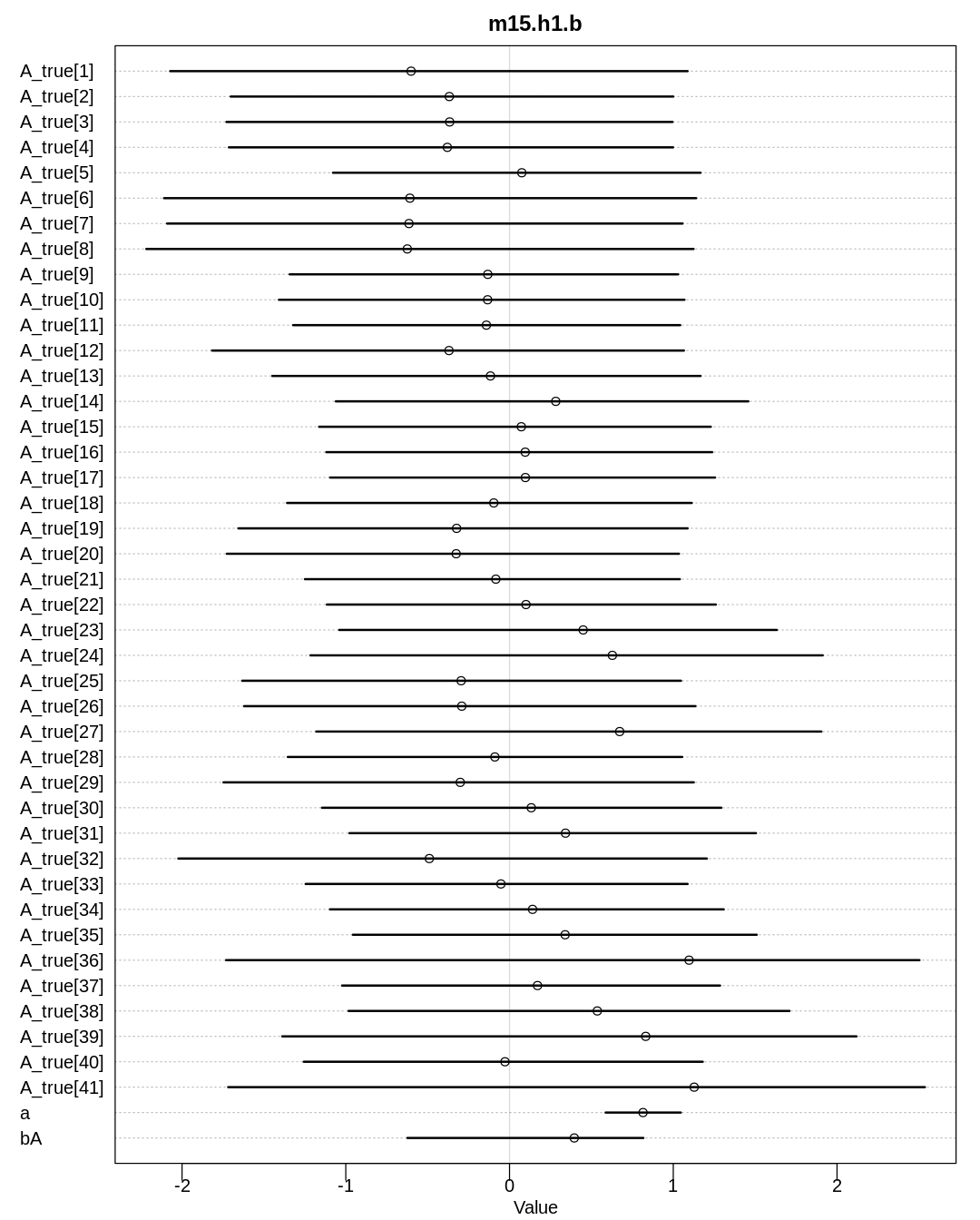

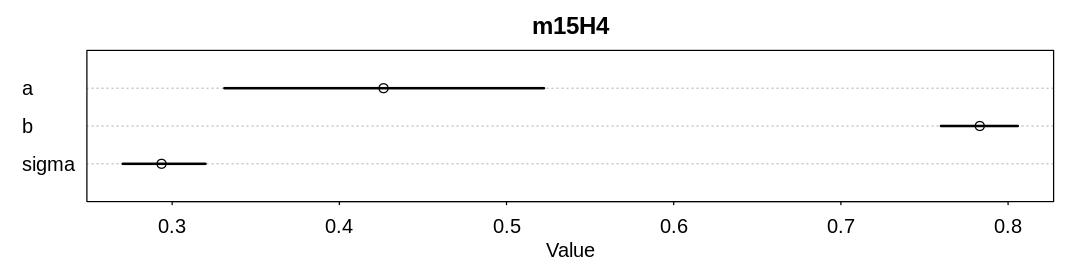

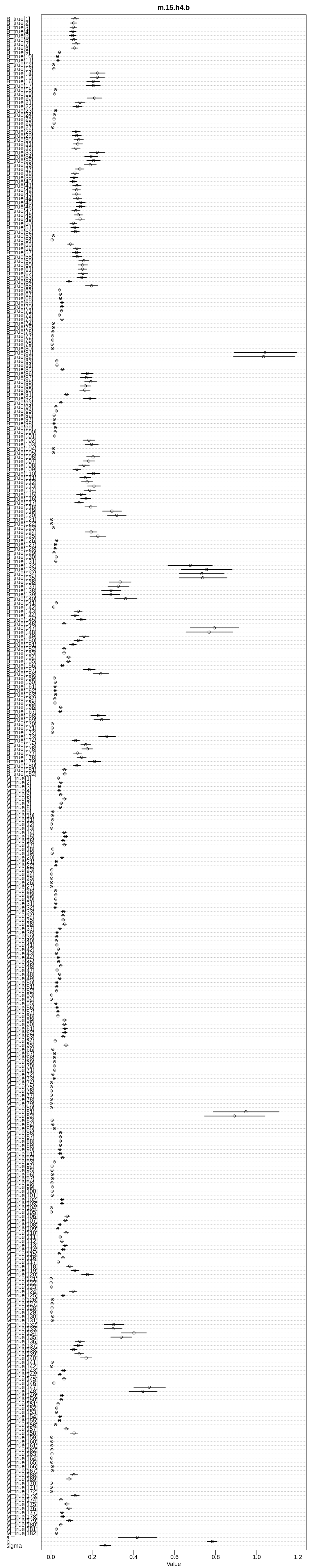

15M5. Return to the singing bird model, m15.9, and compare the posterior estimates of cat

presence (PrC1) to the true simulated values. How good is the model at inferring the missing data?

Can you think of a way to change the simulation so that the precision of the inference is stronger?

set.seed(9)

N_houses <- 100L

alpha <- 5

beta <- (-3)

k <- 0.5

r <- 0.2

cat <- rbern(N_houses, k)

notes <- rpois(N_houses, alpha + beta * cat)

R_C <- rbern(N_houses, r)

cat_obs <- cat

cat_obs[R_C == 1] <- (-9L)

dat <- list(

notes = notes,

cat = cat_obs,

RC = R_C,

N = as.integer(N_houses)

)

m15.9 <- ulam(

alist(

# singing bird model

notes | RC == 0 ~ poisson(lambda),

notes | RC == 1 ~ custom(log_sum_exp(

log(k) + poisson_lpmf(notes | exp(a + b)),

log(1 - k) + poisson_lpmf(notes | exp(a))

)),

log(lambda) <- a + b * cat,

a ~ normal(5, 3),

b ~ normal(-3, 2),

# sneaking cat model

cat | RC == 0 ~ bernoulli(k),

k ~ beta(2, 2),

# imputed values

gq > vector[N]:PrC1 <- exp(lpC1) / (exp(lpC1) + exp(lpC0)),

gq > vector[N]:lpC1 <- log(k) + poisson_lpmf(notes[i] | exp(a + b)),

gq > vector[N]:lpC0 <- log(1 - k) + poisson_lpmf(notes[i] | exp(a))

),

cmdstan = TRUE, data = dat, chains = 4, cores = 4

)

display(precis(m15.9, depth=3), mimetypes="text/plain")

iplot(function() {

plot(precis(m15.9, depth=3), main="m15.9")

}, ar=0.2)

Warning in '/tmp/RtmpjPjDJw/model-122e748371.stan', line 3, column 4: Declaration

of arrays by placing brackets after a variable name is deprecated and

will be removed in Stan 2.32.0. Instead use the array keyword before the

type. This can be changed automatically using the auto-format flag to

stanc

Warning in '/tmp/RtmpjPjDJw/model-122e748371.stan', line 4, column 4: Declaration

of arrays by placing brackets after a variable name is deprecated and

will be removed in Stan 2.32.0. Instead use the array keyword before the

type. This can be changed automatically using the auto-format flag to

stanc

Warning in '/tmp/RtmpjPjDJw/model-122e748371.stan', line 5, column 4: Declaration

of arrays by placing brackets after a variable name is deprecated and

will be removed in Stan 2.32.0. Instead use the array keyword before the

type. This can be changed automatically using the auto-format flag to

stanc

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 finished in 0.4 seconds.

Chain 2 finished in 0.4 seconds.

Chain 3 finished in 0.4 seconds.

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 finished in 0.5 seconds.

All 4 chains finished successfully.

Mean chain execution time: 0.4 seconds.

Total execution time: 0.6 seconds.

mean sd 5.5% 94.5% n_eff Rhat4

a 1.6305739 0.06487360 1.5270624 1.7341393 1246.262 1.0007234

b -0.8686414 0.12404909 -1.0655309 -0.6745582 1370.308 1.0002001

k 0.4613826 0.05293322 0.3792623 0.5478094 1578.813 0.9998864

lpC0[1] -3.8678825 0.25926699 -4.2997987 -3.4731018 1307.226 1.0008298

lpC0[2] -2.5370281 0.10940782 -2.7196871 -2.3717162 1604.795 1.0003821

lpC0[3] -2.3969789 0.13123678 -2.6149412 -2.2036879 1472.008 1.0000513

lpC0[4] -2.6412583 0.18020927 -2.9358888 -2.3658676 1357.050 1.0002515

lpC0[5] -2.6412583 0.18020927 -2.9358888 -2.3658676 1357.050 1.0002515

lpC0[6] -2.5370281 0.10940782 -2.7196871 -2.3717162 1604.795 1.0003821

lpC0[7] -2.3758426 0.10192148 -2.5441358 -2.2288279 1564.462 0.9999698

lpC0[8] -2.3969789 0.13123678 -2.6149412 -2.2036879 1472.008 1.0000513

lpC0[9] -3.1732201 0.23694897 -3.5641388 -2.8074180 1306.062 1.0003836

lpC0[10] -4.1106469 0.29703792 -4.6066845 -3.6474712 1282.459 1.0004647

lpC0[11] -2.6412583 0.18020927 -2.9358888 -2.3658676 1357.050 1.0002515

lpC0[12] -2.3969789 0.13123678 -2.6149412 -2.2036879 1472.008 1.0000513

lpC0[13] -3.1732201 0.23694897 -3.5641388 -2.8074180 1306.062 1.0003836

lpC0[14] -2.3758426 0.10192148 -2.5441358 -2.2288279 1564.462 0.9999698

lpC0[15] -2.5370281 0.10940782 -2.7196871 -2.3717162 1604.795 1.0003821

lpC0[16] -4.1106469 0.29703792 -4.6066845 -3.6474712 1282.459 1.0004647

lpC0[17] -5.3072147 0.38228011 -5.9249241 -4.7161928 1275.016 1.0008237

lpC0[18] -5.7412210 0.35879703 -6.3358815 -5.1745468 1270.034 1.0005170

lpC0[19] -3.3012319 0.20096205 -3.6413410 -2.9936611 1348.218 1.0008078

lpC0[20] -2.6412583 0.18020927 -2.9358888 -2.3658676 1357.050 1.0002515

lpC0[21] -3.1732201 0.23694897 -3.5641388 -2.8074180 1306.062 1.0003836

lpC0[22] -2.8523643 0.14822054 -3.0976313 -2.6325850 1441.347 1.0007080

lpC0[23] -3.1732201 0.23694897 -3.5641388 -2.8074180 1306.062 1.0003836

lpC0[24] -3.3012319 0.20096205 -3.6413410 -2.9936611 1348.218 1.0008078

lpC0[25] -2.6412583 0.18020927 -2.9358888 -2.3658676 1357.050 1.0002515

lpC0[26] -3.1732201 0.23694897 -3.5641388 -2.8074180 1306.062 1.0003836

lpC0[27] -2.5370281 0.10940782 -2.7196871 -2.3717162 1604.795 1.0003821

⋮ ⋮ ⋮ ⋮ ⋮ ⋮ ⋮

PrC1[71] 0.184080542 0.057826721 0.1004002350 0.28357880 1680.333 0.9999298

PrC1[72] 0.342496963 0.071722656 0.2327824950 0.45816177 1658.033 0.9997594

PrC1[73] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[74] 0.739902231 0.058983217 0.6397687550 0.82782831 1374.728 1.0002978

PrC1[75] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[76] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[77] 0.937878873 0.027476536 0.8864772500 0.97174892 1068.440 1.0006343

PrC1[78] 0.184080542 0.057826721 0.1004002350 0.28357880 1680.333 0.9999298

PrC1[79] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[80] 0.342496963 0.071722656 0.2327824950 0.45816177 1658.033 0.9997594

PrC1[81] 0.342496963 0.071722656 0.2327824950 0.45816177 1658.033 0.9997594

PrC1[82] 0.184080542 0.057826721 0.1004002350 0.28357880 1680.333 0.9999298

PrC1[83] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[84] 0.739902231 0.058983217 0.6397687550 0.82782831 1374.728 1.0002978

PrC1[85] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[86] 0.004186114 0.004196283 0.0006075278 0.01178349 1601.748 1.0012753

PrC1[87] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[88] 0.184080542 0.057826721 0.1004002350 0.28357880 1680.333 0.9999298

PrC1[89] 0.868392604 0.042487911 0.7907626650 0.92642500 1154.959 1.0005724

PrC1[90] 0.868392604 0.042487911 0.7907626650 0.92642500 1154.959 1.0005724

PrC1[91] 0.342496963 0.071722656 0.2327824950 0.45816177 1658.033 0.9997594

PrC1[92] 0.342496963 0.071722656 0.2327824950 0.45816177 1658.033 0.9997594

PrC1[93] 0.548759528 0.071358327 0.4348512500 0.65723994 1568.274 0.9998947

PrC1[94] 0.739902231 0.058983217 0.6397687550 0.82782831 1374.728 1.0002978

PrC1[95] 0.042253297 0.023729624 0.0141282785 0.08730985 1678.285 1.0005301

PrC1[96] 0.739902231 0.058983217 0.6397687550 0.82782831 1374.728 1.0002978

PrC1[97] 0.739902231 0.058983217 0.6397687550 0.82782831 1374.728 1.0002978

PrC1[98] 0.739902231 0.058983217 0.6397687550 0.82782831 1374.728 1.0002978

PrC1[99] 0.090158074 0.039075072 0.0389671790 0.16067187 1679.383 1.0002151

PrC1[100] 0.090158074 0.039075072 0.0389671790 0.16067187 1679.383 1.0002151

Indexes of the missing values:

display(which(R_C != 0))

post <- extract.samples(m15.9)

- 1

- 4

- 14

- 22

- 26

- 28

- 29

- 30

- 32

- 41

- 46

- 47

- 48

- 53

- 55

- 59

- 60

- 65

- 66

- 81

- 83

- 84

- 92

- 100

The mean values of PrC1 at those indexes:

mean_prc1 <- apply(post$PrC1, 2, mean)

display(mean_prc1[R_C != 0])

- 0.00900593709

- 0.5487595275

- 0.1840805422

- 0.04225329709

- 0.7399022305

- 0.3424969625

- 0.8683926035

- 0.9378788725

- 0.5487595275

- 0.7399022305

- 0.3424969625

- 0.8683926035

- 0.3424969625

- 0.3424969625

- 0.04225329709

- 0.7399022305

- 0.5487595275

- 0.7399022305

- 0.01950867139

- 0.3424969625

- 0.5487595275

- 0.7399022305

- 0.3424969625

- 0.0901580741

The true simulated values at those indexes:

display(cat[R_C != 0])

- 0

- 0

- 0

- 0

- 1

- 0

- 1

- 1

- 1

- 1

- 0

- 1

- 1

- 1

- 0

- 1

- 0

- 1

- 0

- 0

- 0

- 1

- 0

- 0

If we take PrC1 > 0.5 to mean a cat is present, then these missing elements were predicted

correctly:

cat_prc1 <- as.integer(mean_prc1 > 0.5)

is_correct <- cat_prc1[R_C != 0] == cat[R_C != 0]

display(is_correct)

- TRUE

- FALSE

- TRUE

- TRUE

- TRUE

- TRUE

- TRUE

- TRUE

- TRUE

- TRUE

- TRUE

- TRUE

- FALSE

- FALSE

- TRUE

- TRUE

- FALSE

- TRUE

- TRUE

- TRUE

- FALSE

- TRUE

- TRUE

- TRUE

For a total accuracy of:

display(sum(is_correct) / length(is_correct))

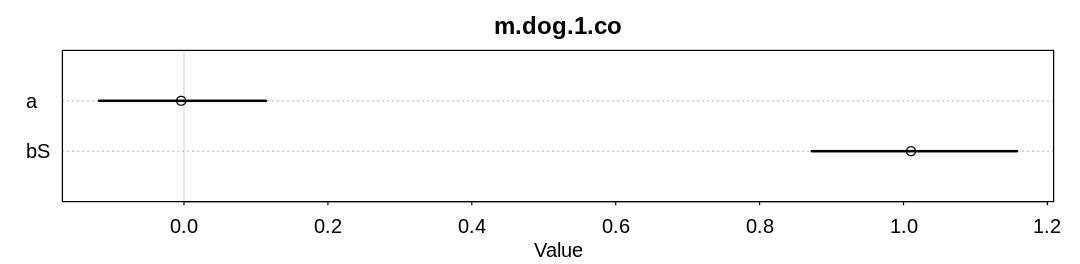

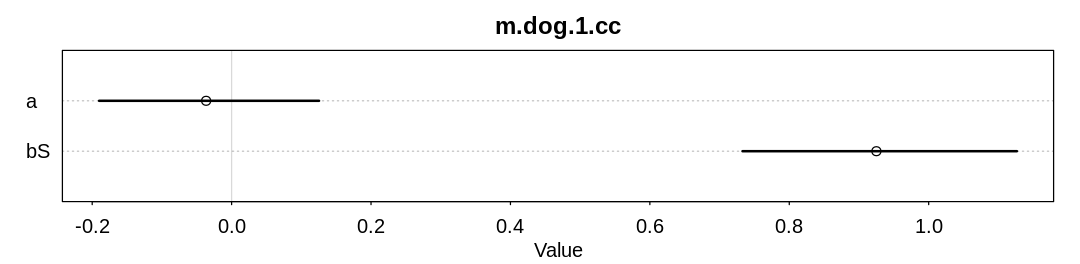

15M6. Return to the four dog-eats-homework missing data examples. Simulate each and then fit one or more models to try to recover valid estimates for \(S \rightarrow H\).

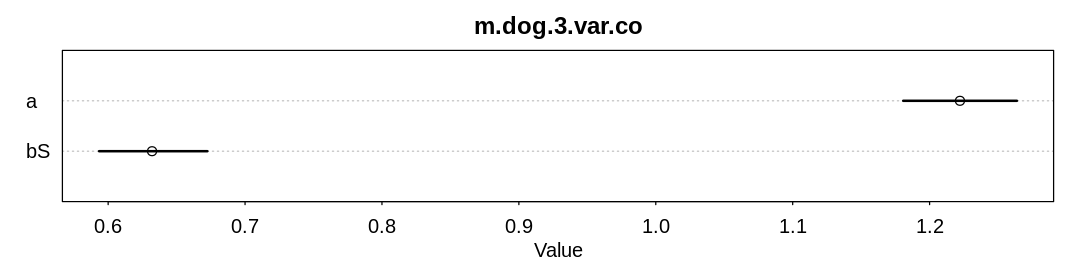

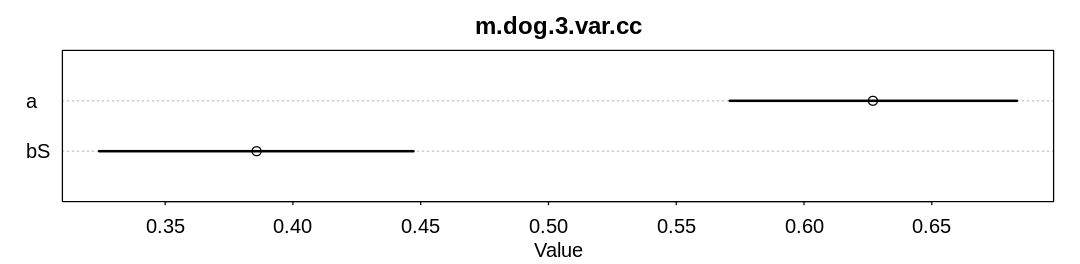

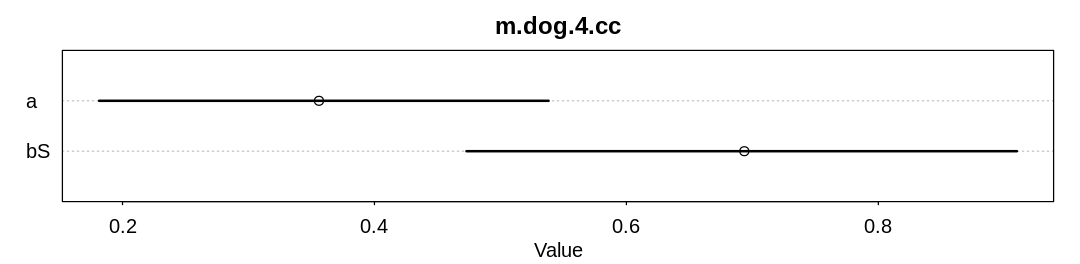

Answer. A model fit to completely observed (co) data and a model fit to complete cases (cc)

for the first scenario, where dogs eat homework at random:

library(data.table)

N <- 100

S <- rnorm(N)

H <- rbinom(N, size = 10, inv_logit(S))

D <- rbern(N) # dogs completely random

Hm <- H

Hm[D == 1] <- NA

d <- data.frame(S = S, H = H, Hm = Hm)

check_co <- function(d, name) {

set.seed(27)

dat.co <- list(

S = d$S,

H = d$H

)

m.dog.co <- ulam(

alist(

H ~ dbinom(10, p),

logit(p) <- a + bS*S,

a ~ dnorm(0, 1),

bS ~ dnorm(0, 1)

), cmdstan = TRUE, data=dat.co, cores=4, chains=4

)

display(precis(m.dog.co, depth=2), mimetypes="text/plain")

iplot(function() {

plot(precis(m.dog.co, depth=2), main=name)

}, ar=4.0)

}

check_co(d, "m.dog.1.co")

check_cc <- function(d, name) {

# browser()

set.seed(27)

d_cc <- d[complete.cases(d$Hm), ]

# t_d <- transpose(d_cc)

# colnames(t_d) <- rownames(d_cc)

# rownames(t_d) <- colnames(d_cc)

# display(t_d)

dat.cc <- list(

S = d_cc$S,

Hm = d_cc$Hm

)

display_markdown("Sample of data remaining for 'complete case' analysis: ")

display(head(d_cc))

m.dog.cc <- ulam(

alist(

Hm ~ dbinom(10, p),

logit(p) <- a + bS*S,

a ~ dnorm(0, 1),

bS ~ dnorm(0, 1)

), cmdstan = TRUE, data=dat.cc, cores=4, chains=4

)

display(precis(m.dog.cc, depth=2), mimetypes="text/plain")

iplot(function() {

plot(precis(m.dog.cc, depth=2), main=name)

}, ar=4.0)

}

check_cc(d, "m.dog.1.cc")

Warning in '/tmp/RtmpjPjDJw/model-1215642add.stan', line 2, column 4: Declaration

of arrays by placing brackets after a variable name is deprecated and

will be removed in Stan 2.32.0. Instead use the array keyword before the

type. This can be changed automatically using the auto-format flag to

stanc

mean sd 5.5% 94.5% n_eff Rhat4

a -0.00386812 0.07272701 -0.1179536 0.1137859 1440.388 0.9994012

bS 1.01038932 0.08952928 0.8723994 1.1575217 1035.581 1.0002041

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 1 finished in 0.1 seconds.

Chain 2 finished in 0.1 seconds.

Chain 3 finished in 0.1 seconds.

Chain 4 finished in 0.1 seconds.

All 4 chains finished successfully.

Mean chain execution time: 0.1 seconds.

Total execution time: 0.2 seconds.

Sample of data remaining for ‘complete case’ analysis:

| S | H | Hm | |

|---|---|---|---|

| <dbl> | <int> | <int> | |

| 3 | -0.3483160 | 6 | 6 |

| 5 | 0.2841523 | 5 | 5 |

| 8 | 0.3812747 | 5 | 5 |

| 10 | 0.3922908 | 8 | 8 |

| 13 | 0.1487904 | 8 | 8 |

| 16 | 1.1348391 | 9 | 9 |

Warning in '/tmp/RtmpjPjDJw/model-1230488a32.stan', line 2, column 4: Declaration

of arrays by placing brackets after a variable name is deprecated and

will be removed in Stan 2.32.0. Instead use the array keyword before the

type. This can be changed automatically using the auto-format flag to

stanc

mean sd 5.5% 94.5% n_eff Rhat4

a -0.03654073 0.09791599 -0.1900768 0.1254365 1339.579 1.001207

bS 0.92498400 0.12369701 0.7329099 1.1265327 1116.149 1.000748

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 1 finished in 0.1 seconds.

Chain 2 finished in 0.1 seconds.

Chain 3 finished in 0.1 seconds.

Chain 4 finished in 0.1 seconds.

All 4 chains finished successfully.

Mean chain execution time: 0.1 seconds.

Total execution time: 0.2 seconds.

As expected (explained in the chapter) we are able to infer bS both with and without all the data.

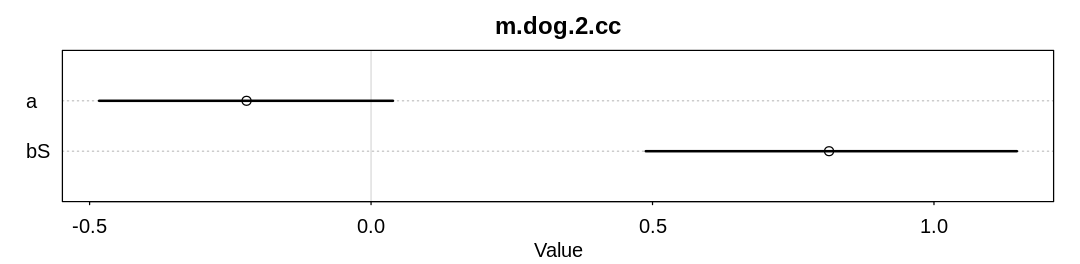

In the second scenario, dogs of hard-working students eat homework. We don’t need to fit a new

completely observed (co) model because it shouldn’t be any different from m.dog.1.co. A model

fit to complete cases (cc):

D2 <- ifelse(S > 0, 1, 0)

Hm2 <- H

Hm2[D2 == 1] <- NA

d <- data.frame(S = S, H = H, Hm = Hm2)

check_cc(d, "m.dog.2.cc")

Sample of data remaining for ‘complete case’ analysis:

| S | H | Hm | |

|---|---|---|---|

| <dbl> | <int> | <int> | |

| 2 | -1.29318475 | 2 | 2 |

| 3 | -0.34831602 | 6 | 6 |

| 4 | -0.35097548 | 2 | 2 |

| 20 | -1.44522679 | 2 | 2 |

| 21 | -0.68131726 | 2 | 2 |

| 24 | -0.04805382 | 6 | 6 |

Warning in '/tmp/RtmpjPjDJw/model-1247d6a9ed.stan', line 2, column 4: Declaration

of arrays by placing brackets after a variable name is deprecated and

will be removed in Stan 2.32.0. Instead use the array keyword before the

type. This can be changed automatically using the auto-format flag to

stanc

mean sd 5.5% 94.5% n_eff Rhat4

a -0.2211787 0.1625756 -0.4830884 0.03894572 567.4133 1.007038

bS 0.8135978 0.2028660 0.4879648 1.14727990 421.4382 1.008410

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 4 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 4 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 4 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 4 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 1 finished in 0.1 seconds.

Chain 2 finished in 0.1 seconds.

Chain 3 finished in 0.1 seconds.

Chain 4 finished in 0.1 seconds.

All 4 chains finished successfully.

Mean chain execution time: 0.1 seconds.

Total execution time: 0.2 seconds.

As expected (explained in the chapter) we are able to infer bS both with and without all the data.

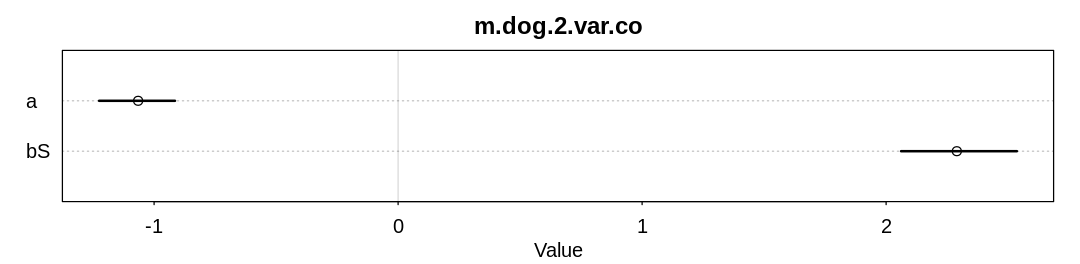

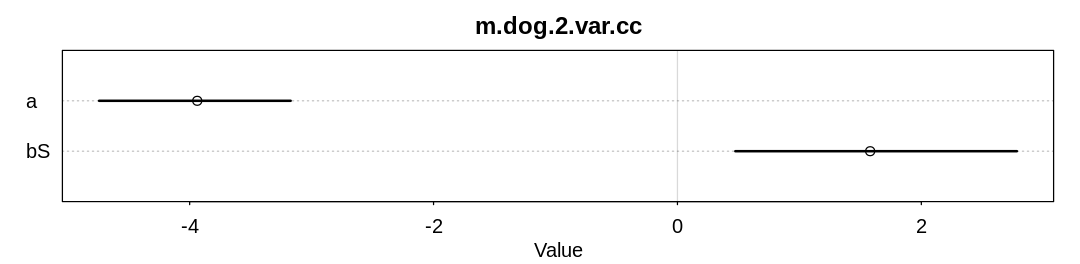

The text suggests a variation on scenario 2 where the function \(S \rightarrow H\) is nonlinear and unobserved only in the domain of the function where it is non-linear. Let’s simulate this scenario and attempt to infer the coefficient of the (wrong) linear function with and without all the data:

H3 <- ifelse(S > 0, H, 0)

Hm3 <- H3

Hm3[D2 == 1] <- NA

d <- data.frame(S = S, H = H3, Hm = Hm3)

check_co(d, "m.dog.2.var.co")

check_cc(d, "m.dog.2.var.cc")

Warning in '/tmp/RtmpjPjDJw/model-127154fd95.stan', line 2, column 4: Declaration

of arrays by placing brackets after a variable name is deprecated and

will be removed in Stan 2.32.0. Instead use the array keyword before the

type. This can be changed automatically using the auto-format flag to

stanc

mean sd 5.5% 94.5% n_eff Rhat4

a -1.065430 0.09883981 -1.225324 -0.9143453 983.0190 1.001233

bS 2.289743 0.14768992 2.061293 2.5359345 849.6928 1.001852

Running MCMC with 4 parallel chains, with 1 thread(s) per chain...

Chain 1 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 1 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 1 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 1 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 1 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 1 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 1 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 1 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 1 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 1 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 1 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 1 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 2 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 2 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 2 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 2 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 2 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 2 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 2 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 2 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 2 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 2 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 2 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 2 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 3 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 3 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 3 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 3 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 3 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 3 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 3 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 3 Iteration: 600 / 1000 [ 60%] (Sampling)

Chain 3 Iteration: 700 / 1000 [ 70%] (Sampling)

Chain 3 Iteration: 800 / 1000 [ 80%] (Sampling)

Chain 3 Iteration: 900 / 1000 [ 90%] (Sampling)

Chain 3 Iteration: 1000 / 1000 [100%] (Sampling)

Chain 4 Iteration: 1 / 1000 [ 0%] (Warmup)

Chain 4 Iteration: 100 / 1000 [ 10%] (Warmup)

Chain 4 Iteration: 200 / 1000 [ 20%] (Warmup)

Chain 4 Iteration: 300 / 1000 [ 30%] (Warmup)

Chain 4 Iteration: 400 / 1000 [ 40%] (Warmup)

Chain 4 Iteration: 500 / 1000 [ 50%] (Warmup)

Chain 4 Iteration: 501 / 1000 [ 50%] (Sampling)

Chain 4 Iteration: 600 / 1000 [ 60%] (Sampling)